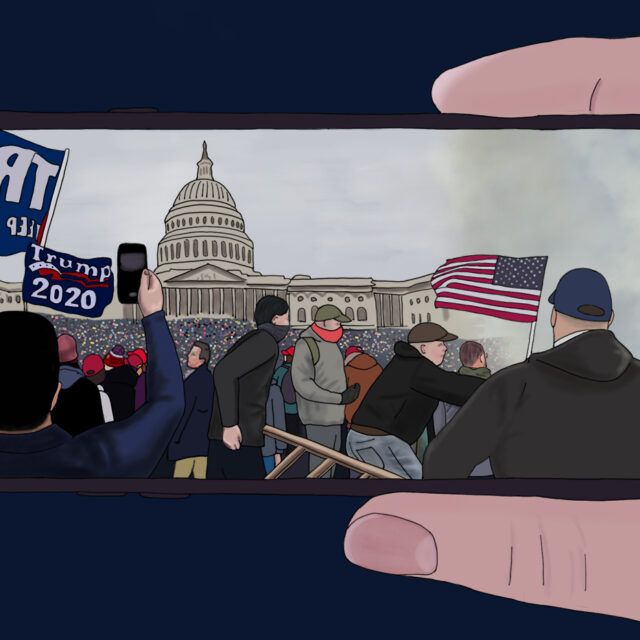

The violent riots at the Capitol were abetted and encouraged by posts on social media sites. But from a legal and practical standpoint, it’s often hard to hold social media companies responsible for what their users post, Northeastern professors say.

Jack McDevitt, director of the Institute on Race and Justice, argues that many of the posts amount to hate crimes—and that tech companies should be held responsible for violent rhetoric disseminated on their sites. But when it comes to spreading misinformation, exactly who is liable is less clear, says David Lazer, university distinguished professor of political science and computer and information sciences.

After last week’s storming of the Capitol, a number of tech companies have banned President Donald Trump from their platforms for making unsubstantiated claims about the election. And several big tech companies cut ties with Parler, an app protesters used to spread violent rhetoric in advance of the riot. The moves have sparked a debate about the responsibility of tech companies to monitor hate speech and misinformation on their sites.

For hate speech, “of course they’re responsible,” McDevitt says. “Free speech only goes so far. We can pass legislation that limits these sites. We have the means to hold them accountable, and we should.”

Cases against individuals or companies for hate speech or incitement of violence are hard to make, especially when social media is involved, McDevitt says. “But that doesn’t mean we shouldn’t try. Most of the laws were written to deal with traditional hate crimes. We need to update these the way we did with internet bullying where we make it something people can be held accountable for.”