By Zhen Guo

Background

This semester, I had the opportunity to collaborate with the INTERACT Animal Lab at Northeastern University on a project situated at the intersection of animal behavior and emerging technology. My work was conducted under the mentorship of Dr. Rébecca Kleinberger and in close partnership with a pre-vet student, Julia Van Buskirk. I first met with Dr. Kleinberger via Zoom to learn more about the lab and the project. After that, I met monthly with Julia and stayed in touch with her asynchronously via Discord.

Our work is grounded in the field of Animal-Computer Interaction (ACI), an interdisciplinary domain that has evolved from the established frameworks of Human-Computer Interaction (HCI). While HCI focuses on optimizing the interface between humans and digital systems, ACI shifts the design paradigm to recognize non-human animals as primary stakeholders and active users of technology. This field challenges traditional anthropocentric design by developing frameworks that prioritize animal agency, welfare, and multispecies communication.

The specific project I am contributing to involves studying the behavior of cats in home-based clinical settings to inform the development of veterinary telehealth software. By analyzing how feline patients respond to different exams conducted by their owners at home, we aim to build tools that better support remote medical assessments. Specifically, I supported Julia’s research by exploring digital humanities tools that could facilitate more efficient and accurate coding of behavior data.

The Coding Protocol: Bridging Observation and Data

To identify the most effective tool for this study, Julia and I conducted an in-depth review of her coding protocol for the video recordings of the study sessions. Each study session is structured into three distinct stages. For each stage, the researcher must precisely code five key indicators for the patient (the cat): Body Posture, Ventilation, Eyes and Pupils, Ears, and Tail movement. To translate these feline behaviors into actionable data, we use a standardized Cat Stress Score (CSS), ranging from 1 (Relaxed) to 7 (Terrified).

For example, a cat at a CSS level of 1–2 presents a loosely extended posture with ears pointed upward and normal pupils. However, as the stress level increases toward a 6 or 7, we observe distinct physiological shifts: ventilation becomes rapid and shallow, the eyes transition to dilated “whale eyes,” and the ears become fully flattened against the head. By capturing these specific anatomical markers—from a relaxed tail gently wrapped around the body to a tense, thrashing tail—we can objectively quantify a cat’s transition from curious exploration to defensive, motionless behavior.
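To make the shape of this data concrete, here is a minimal sketch of how a single coded observation might be represented. It is an illustration only, not the lab's actual schema: the class name `Observation`, its field names, and the assumption that each indicator receives its own 1 to 7 score at a given timestamp are all hypothetical.

```python
from dataclasses import dataclass

# The five indicators named in the protocol (identifier spellings are assumptions).
INDICATORS = ("body_posture", "ventilation", "eyes_and_pupils", "ears", "tail")


@dataclass(frozen=True)
class Observation:
    """One coded event: a CSS score for one indicator at one moment."""
    stage: int       # 1, 2, or 3 (the three stages of a session)
    time_s: float    # seconds from the start of the video
    indicator: str   # one of INDICATORS
    score: int       # Cat Stress Score, 1 (Relaxed) to 7 (Terrified)

    def __post_init__(self):
        # Reject values that fall outside the protocol's ranges.
        if self.stage not in (1, 2, 3):
            raise ValueError(f"stage must be 1-3, got {self.stage}")
        if self.indicator not in INDICATORS:
            raise ValueError(f"unknown indicator: {self.indicator}")
        if not 1 <= self.score <= 7:
            raise ValueError(f"score must be 1-7, got {self.score}")
        if self.time_s < 0:
            raise ValueError("time_s must be non-negative")


# Example with made-up values, for illustration only.
obs = Observation(stage=2, time_s=73.5, indicator="ears", score=6)
```

Validating at creation time means an out-of-range score or a mistyped indicator is caught when it is entered rather than during analysis.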

Beyond just observation, the goal is to export specific timestamps and coding data for overall analysis. We are essentially asking: Which components of a physical exam can be effectively performed at home? And do cats exhibit significantly lower stress markers in a home environment compared to a clinic?
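As a rough sketch of what such an export could look like, the snippet below writes coded observations to CSV and averages the scores per setting. The column names, the `setting` values, and the rows themselves are placeholders invented for illustration; they are not study data, and a real home-versus-clinic comparison would need a proper statistical test rather than a simple mean.

```python
import csv
import io
from collections import defaultdict
from statistics import mean

# Hypothetical export columns; the real export format may differ.
FIELDS = ["session_id", "setting", "stage", "time_s", "indicator", "score"]


def to_csv(rows):
    """Serialize coded observations (dicts keyed by FIELDS) to CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def mean_score_by_setting(rows):
    """Average the CSS scores separately for each setting (e.g. home, clinic)."""
    scores = defaultdict(list)
    for row in rows:
        scores[row["setting"]].append(row["score"])
    return {setting: mean(values) for setting, values in scores.items()}


# Placeholder rows, NOT real study data.
rows = [
    {"session_id": "demo-1", "setting": "home", "stage": 1,
     "time_s": 12.0, "indicator": "ears", "score": 2},
    {"session_id": "demo-1", "setting": "home", "stage": 2,
     "time_s": 95.5, "indicator": "tail", "score": 3},
    {"session_id": "demo-2", "setting": "clinic", "stage": 1,
     "time_s": 8.0, "indicator": "ears", "score": 5},
]

csv_text = to_csv(rows)
averages = mean_score_by_setting(rows)
```

Keeping one row per timestamped code makes the export easy to load into statistical software, whichever annotation tool produced it.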

Currently, the laboratory uses BORIS, open-source software that is standard in behavioral research. However, its complex functionality and steep learning curve often slow down the coding process. My role has been to investigate alternative tools from the digital humanities that might offer a more intuitive user experience without sacrificing the precision required for scientific data collection.

In Search of the Perfect Interface

Selecting the right software required a balance between high-level functionality and researcher-friendly design. Our primary objective was to find a platform that allows fast data entry with minimal hand movement, which should improve both coding precision and efficiency. We also prioritized a streamlined user interface and effective onboarding tutorials to flatten the learning curve for the researchers analyzing the data, so that the technology serves as a bridge rather than a barrier to the research. Lastly, given the sensitive nature of clinical observations, robust privacy protocols were non-negotiable to keep our data secure.

Besides the usability requirements, we aimed to find open-source tools because of budget restrictions. This is challenging because most free tools are under-maintained and limited in the amount of data they can handle. Eventually, I developed a list of available tools, each of which fits a certain aspect of our coding protocol. In the following section, I will go over the pros and cons of the software I explored.

Software Comparisons

ClipThoughts

ClipThoughts is a web-based video editing tool that allows you to upload a video or use URLs for videos hosted on third-party platforms. The interface plays the video while letting you type annotations at a given timestamp.

Pros:

- Minimal Learning Curve: Features a straightforward, intuitive interface that allows researchers to start coding almost immediately.

- Effortless Data Export: Supports the seamless extraction of timestamps and annotations in standard text formats for further analysis.

- Ongoing Development: Benefits from an active user community and a consistent schedule of software updates.

Cons:

- Data Privacy Risks: Mandates video uploads to third-party cloud storage, which poses a security concern for sensitive research data.

- Inefficient Coding Workflow: Lacks keyboard shortcuts for rapid entry, requiring time-consuming manual input for every annotation.

- Single-Track Constraints: Offers no multi-track functionality, forcing researchers to overlay all five body-part codes on a single, crowded timeline.

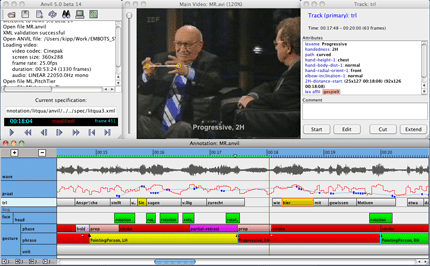

ANVIL

The latest version, ANVIL 6, was last updated in 2017. Although the tool has not been updated for some time, it is still available for download. Unfortunately, the current version could not load the study videos because of its limits on handling large data.

Datavyu

Datavyu is a tool built for behavioral scientists, designed for efficiency and minimal hand movement. It consists of a data control panel, a video window, and a data entry spreadsheet.

Pros:

- Simultaneous Multi-Video Support: Enables the synchronized labeling of multiple video files at once, a game-changer for our two-camera setup.

- Dedicated Multi-Column Layout: Provides separate tracks for different variables, allowing each feline body part to be coded in its own distinct column.

- Fully Customizable Coding Schemes: Offers the flexibility to build a bespoke protocol tailored to the unique sensory markers of feline stress.

- High-Speed Keyboard Shortcuts: Dramatically accelerates data entry by allowing the researcher to log behaviors through rapid-fire keystrokes.

- Versatile Multi-Format Export: Supports a wide range of file types for extracting annotations, ensuring seamless integration with statistical software.

- Air-Gapped Data Security: Functions entirely offline, allowing all analysis to be conducted on disconnected hardware for maximum privacy and ethical compliance.

Cons:

- Limited Column Navigation: Lacks dedicated shortcuts for switching between active columns, which can create a bottleneck during high-speed coding sessions.

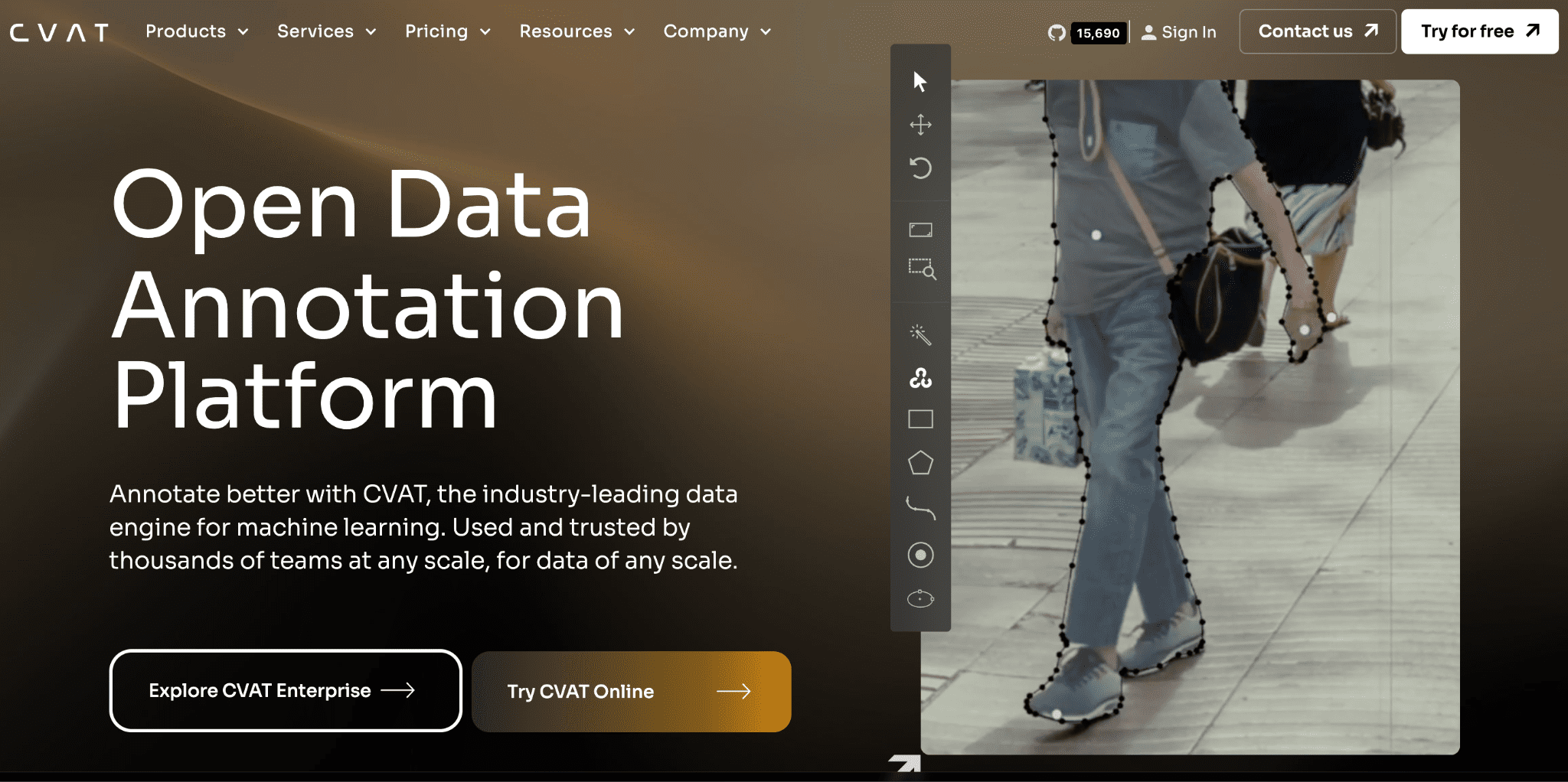

CVAT

CVAT is an AI-powered open-source tool that is primarily used to annotate data for training machine learning models.

Pros:

- AI-Powered Subject Tracking: Utilizes AI-assisted labeling to automatically track subjects across frames, significantly reducing the manual labor of following a moving patient.

Cons:

- Excessive Feature Complexity: Includes a vast array of specialized functions that far exceed the needs of a behavioral study, leading to a cluttered and overwhelming interface.

- Cloud-Dependent Security Risks: Requires users of the free tier to upload video files to third-party cloud storage, presenting a significant privacy barrier for sensitive clinical data.

Reflection

The quest for the perfect tool continues. Through this process, I’ve realized that most video annotation software today is built for one of two things: giving feedback to editors or feeding data to AI. When it comes to the specific, granular needs of behavioral studies, the options are surprisingly thin. Even the “top tier” paid tools are often so complex that they hinder rather than help the workflow.

Perhaps the most surprising takeaway was the “age gap” in the software: most of the tools currently used in behavioral labs were built nearly ten years ago, and I am not sure why. While technology moves at lightning speed, these essential research tools seem frozen in time. It’s a reminder that as we move forward, we need more engineers to collaborate with scientists to build tools that prioritize the human (and feline) observers.