Snapchat (Snap Inc.)

The Evolution of Social Media Content Labeling: An Online Archive

As part of the Ethics of Content Labeling Project, we present a comprehensive overview of social media platform labeling strategies for moderating user-generated content. We examine how visual, textual, and user-interface elements have evolved as technology companies have attempted to inform users about misinformation, harmful content, and more.

Authors: Jessica Montgomery Polny, Graduate Researcher; Prof. John P. Wihbey, Ethics Institute/College of Arts, Media and Design

Last Updated: June 3, 2021

Snapchat Overview

Snap Inc. identifies itself as a “camera company,” in that the company primarily develops digital lenses, filters, virtual-reality software, and image rendering for the communication app Snapchat. The support page “How to Use Snapchat” provides an overview of the application’s functions. The purpose of the app is to provide dynamic communication, with videos and photos to be sent to friends as well as video-calling capabilities. Users can also post 24-hour ephemeral stories that can be viewed by their friends on the app.

Few content labels are implemented on Snapchat, but the platform aims to provide authoritative information through its public-facing feeds. This includes the Discover page that allows for exploration of news and curated content, and Spotlight for culture and entertainment highlights, such as award shows or concerts. Other pages curated by the platform provide users with relevant information on real-world events, including the U.S. 2020 elections and COVID-19.

Snapchat and Content Labeling

The Snapchat Community Guidelines regarding misinformation and harmful content focus on removal rather than labeling. The guidelines prohibit content that promotes “malicious deception and deliberately spreading false information that causes harm, such as denying the existence of tragic events.” Most content moderation by Snap Inc. is applied to the public-facing Discover feed, but the platform may also remove content that violates these guidelines if it is reported by users. Hate speech and discrimination, which the platform considers contributors to harmful misinformation, are also subject to removal.

Discover and Spotlight are public pages that host news and entertainment, and the community guidelines stated that media partners with content on these pages are responsible for ensuring its accuracy. Fact-checks of media outlets were conducted by third-party organizations, independent of Snapchat moderation. Misinformation from Snapchat was sometimes documented on the pages of fact-checking organizations, for content such as fake news or cross-platform disinformation campaigns that used Snapchat features.

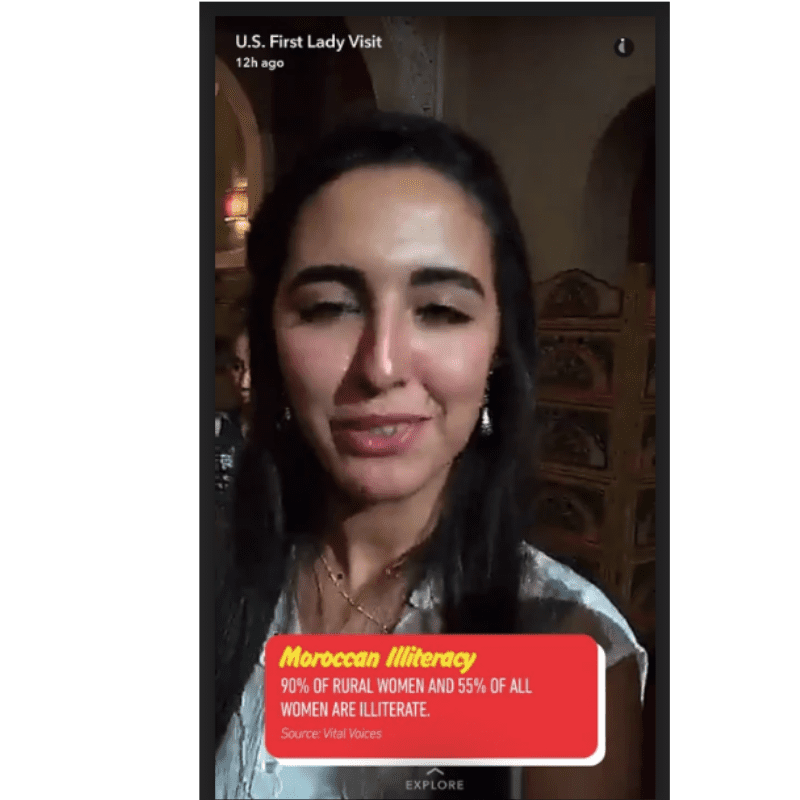

Misinformation did appear in in-app content, as in the above example of false information on illiteracy in Morocco shared on the platform’s public Spotlight feed. While the independent fact-check debunked the claim, Snap Inc. did not remove the content or penalize the user before the snap disappeared on its own. Snapchat’s image manipulation features were also used as tools of misinformation. For instance, as seen above, a doctored image of former president Trump was created with a Snapchat filter and shared across numerous other social media platforms to spread misinformation regarding his age and upbringing.

Snap Inc. CEO Evan Spiegel published an editorial with Axios in November 2017, “How Snapchat is separating social from media,” in which he characterized Snapchat as a communication application, as opposed to an open feed of media and threads. Rather than posting to a feed or forum, users send ephemeral content to friends or post 24-hour ephemeral stories. Curated content is meant to remain private among contacts, rather than being re-posted and shared among feeds or groups. Spiegel claimed in Axios that false news and misinformation are less common on Snapchat than on social media apps, and that “content designed to be shared by friends is not necessarily content designed to deliver accurate information.”

Snapchat and the U.S. 2020 Elections

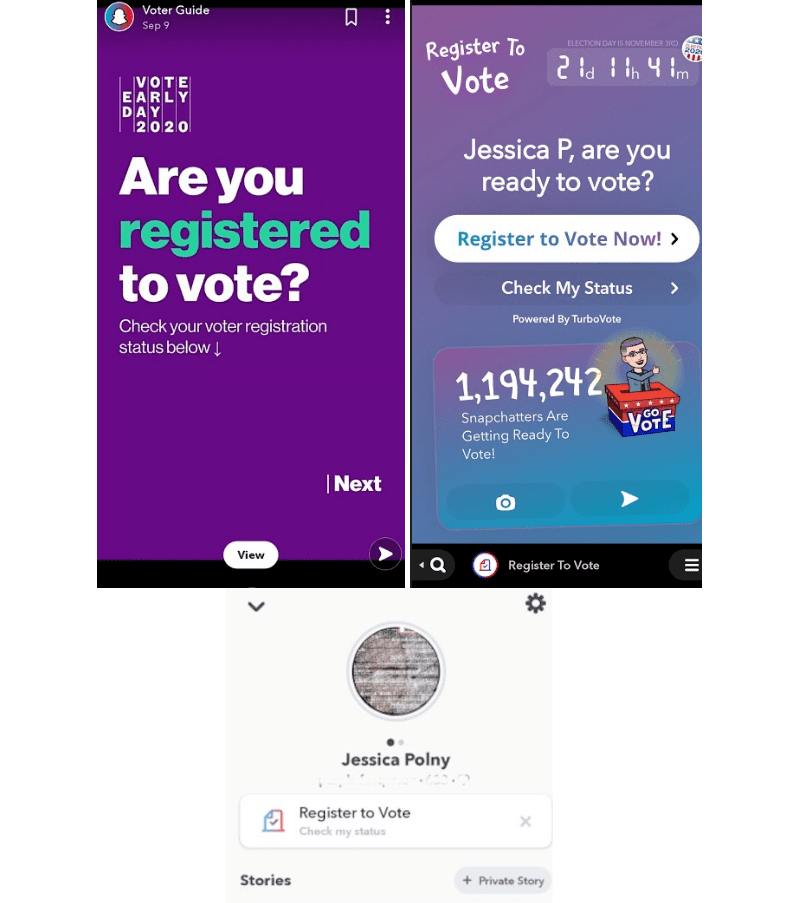

For the U.S. 2020 elections, Snap Inc. attempted to promote civic engagement and provide authoritative information. As of September 2018, the profiles of all users 18 and older would include resources for voter registration. Snapchat also promoted civic engagement on the app with filters and icons for user content. By encouraging users to vote and providing resources for voters, Snapchat hoped to deter misinformation relating to civic processes in the United States.

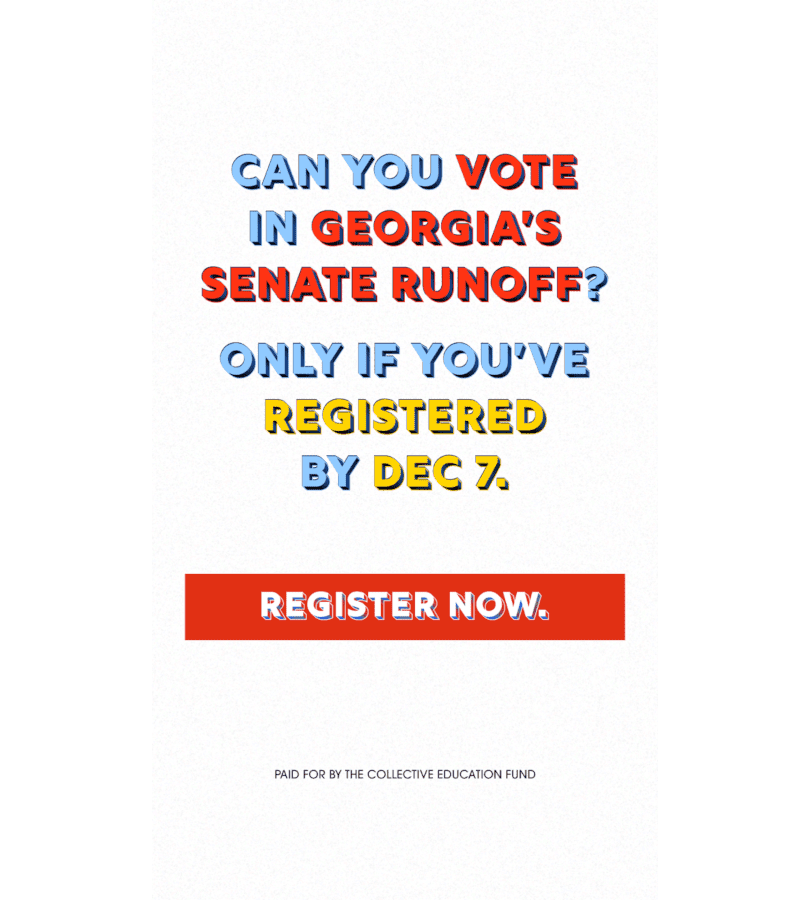

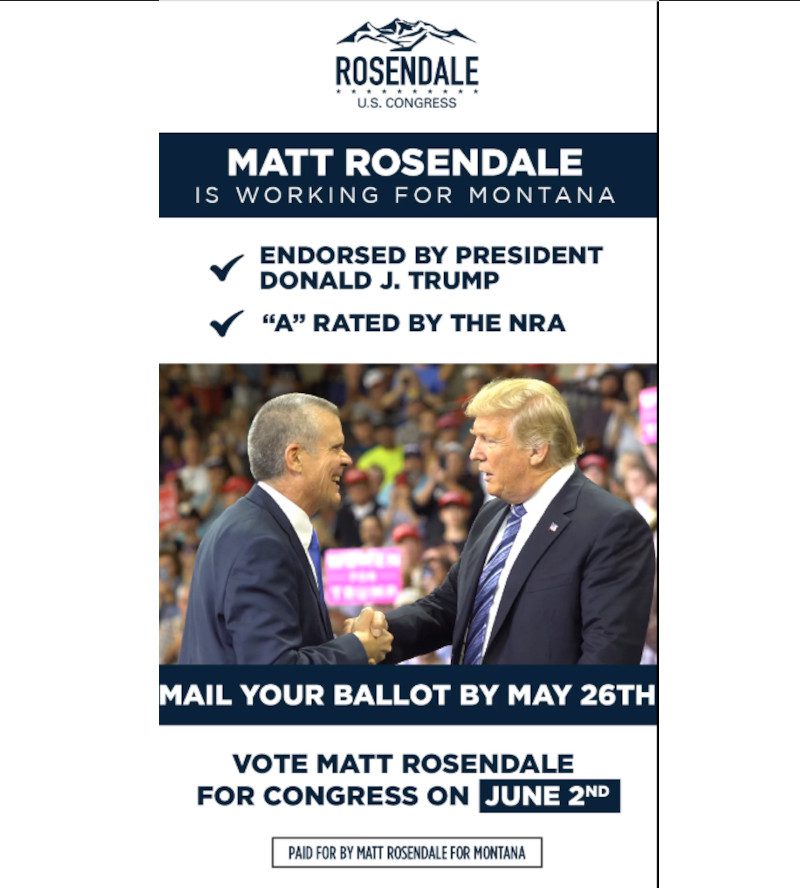

Snap Inc. updated its advertising policies for political content in May 2019, in preparation for the upcoming presidential elections in the United States. Political advertisements were labeled with a “paid for by” disclosure, and Snap Inc. provided information on the political party or organization that funded the ad. According to these policies, Snap Inc. would also internally review all political ads, and might “require substantiation of an advertiser’s factual claims” in order to prevent misinformation and fake news on the platform.

In October of 2020, Forbes interviewed Sofia Gross, the Public Policy Manager for Snap Inc. She noted that the voting initiatives on Snapchat were curated to focus on three areas: voter registration, voter education, and voter participation. The platform has over 100 million users, and Gross stated that the app had so far aided 425,000 users with voter registration, “and 57% of them showed up.” Gross also explained that authoritative content on the Discover feed was provided by civic and social justice organizations such as the NAACP and ACLU.

Snapchat and COVID-19

Snap Inc. published an official response to COVID-19, “Safety First,” in the Snap Newsroom on March 24, 2020. The central approach to preventing COVID-19 misinformation on the platform was to provide users with authoritative information. For example, filters helped promote safe social distancing and health practices, as recommended by the World Health Organization. This newsroom announcement also reiterated platform policies that prohibited the sharing of misinformation, or any content “that deceives or deliberately spreads false information that causes harm,” on public content moderated by Snap Inc.

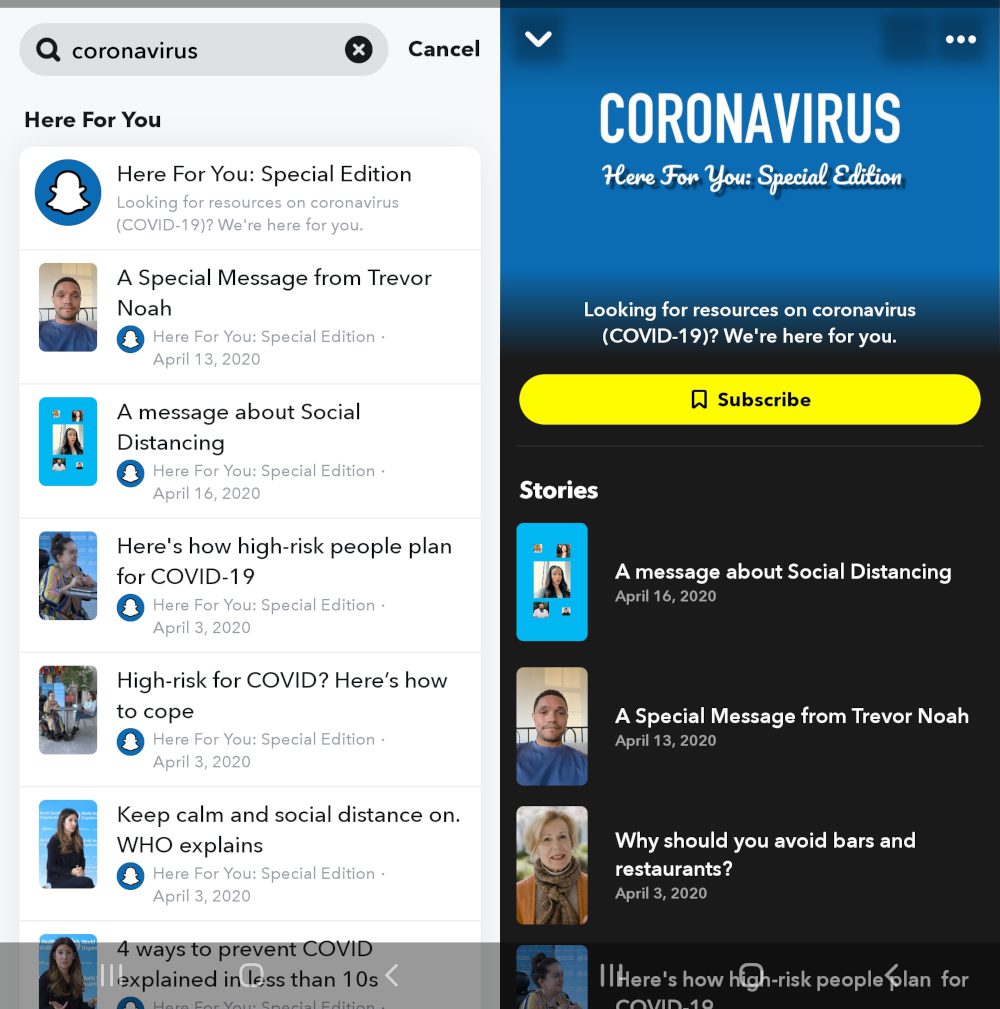

As part of its COVID-19 response, Snap Inc. launched a curated page for authoritative health information on Snapchat’s public-facing Discover feed, where users could view news media and specially curated content. On the Discover page, the COVID-19 “Here for You” feature was a collection of stories from both experts and platform-curated content. The goal of this page was to inform users about relevant subjects such as personal protective equipment, advice for staying at home, and facts on vaccination. When users searched for related topics on Discover, Snapchat also promoted content from the “Here for You” page.

Snapchat Research Observations

Snapchat strongly emphasized providing reliable information to users over evaluating individual content. This is evident in the in-platform resources provided in response to real-world events, such as voter registration guides and COVID-19 health and safety information. However, the overall implementation of content moderation with regard to labeling and misinformation was lacking in comparison to other platforms.

The platform’s partnerships with news outlets and publications enabled Snapchat to moderate the media partners it features. However, Snap Inc. would likely not respond to a specific piece of content until a user reported it or an independent fact-checker published a rebuttal. In the case of former president Donald J. Trump on Snapchat, Snap Inc. chose to no longer promote his page on Discover, but did not remove any particular content. The platform eventually banned Trump altogether in January of 2021 for violating media partner guidelines. In response to COVID-19, the platform attempted to curate reliable information with public content on Discover, in collaboration with media partners and health organizations.

Snapchat provided a list of prohibited content in its community guidelines, as opposed to implementing labels and warnings on content. But the criteria for content in the “false information” category were ambiguous, as Snap Inc. stated that misinformation must be malicious, deliberate, and cause harm. This focus on harm is a narrow basis for evaluating misinformation, and it leaves unspecified how true or authentic content must be to pass moderation. Further, content violations required a user report, and there was limited proactive platform evaluation. Snapchat generally appeared to scrutinize the health authorities and news publications to be featured on its public-facing Discover page, but the platform did not provide clear, publicly available criteria for those considerations.