Facebook and Instagram (Facebook Inc.)

The Evolution of Social Media Content Labeling: An Online Archive

As part of the Ethics of Content Labeling Project, we present a comprehensive overview of social media platform labeling strategies for moderating user-generated content. We examine how visual, textual, and user-interface elements have evolved as technology companies have attempted to inform users about misinformation, harmful content, and more.

Authors: Jessica Montgomery Polny, Graduate Researcher; Prof. John P. Wihbey, Ethics Institute/College of Arts, Media and Design

Last Updated: June 3, 2021

Facebook Inc. Overview

Facebook began as a social networking service website in 2004, founded by Mark Zuckerberg. The platform began as a simple post-and-comment forum between “friends” linked online, and it has now expanded to include likes, photos, videos, live streams, groups, pages, and advertising. Facebook Inc. now also has corporate ownership of multiple subsidiary social networking platforms, including Instagram and WhatsApp.

When it comes to content labeling and moderation, Facebook Inc. utilizes a metric of “harm” to fact-check and rate content containing misinformation, and implements the appropriate labels based on its criteria. When the Facebook platform first adopted fact-check labels in 2016, they were limited to text-based banners. Now, interstitials and click-through mechanisms on Facebook and Instagram provide a clearer indication of review and increased friction against user engagement with harmful misinformation.

Visible indicators of labeling have evolved steadily by design, while policies regarding the implementation of labels for Facebook and Instagram have evolved as a reaction to real-world events. The misinformation shared around important events, most notably the 2020 U.S. Elections and COVID-19, was determined by Facebook Inc. to constitute a concerning level of harm.

The following analysis is based on open source information about the platform. The analysis only relates to the nature and form of the moderation, and we do not here render judgments on several key issues, such as: speed of labeling response (i.e., how many people see the content before it is labeled); the relative success or failure of automated detection and application (false negatives and false positives by learning algorithms); and actual efficacy with regard to users (change of user behavior and/or belief).

Facebook Platform Content Labeling

Facebook platform examples have been captured primarily as desktop screenshots, due to the diversity and multiplicity of content on the platform.

Facebook attempts to combat misinformation by removing and reducing the visibility of harmful content, as well as informing users with authoritative sources. A large part of the Facebook content assessment strategy relies on third-party fact checkers. These fact-checking organizations are all signatories of the International Fact Checking Network, a mandatory condition of collaborating with Facebook Inc. The platform’s community standards, under the section “Integrity & Authenticity,” include the moderation of false news. Platform guidelines for misinformation moderation were presented in a foundational Newsroom announcement from 2019, “Remove, Reduce, and Inform”:

- Remove. In the general Community Standards, Facebook provides information on the content subject to removal – hate speech, violent and graphic content, adult nudity and sexual activity, sexual solicitation, and cruel and insensitive content.

- Reduce. Regarding false news, reduction includes (a) “Disrupting economic incentives for people, Pages and domains that propagate misinformation,” (b) “Using various signals, including feedback from our community, to inform a machine learning model that predicts which stories may be false,” (c) “Reducing the distribution of content rated as false by independent third-party fact-checkers.”

- Inform. “Empowering people to decide for themselves what to read, trust and share by informing them with more context and promoting news literacy.” Strategies on informing, including the context button, page transparency, and context warning labels are included in these tables.

The evolution of the labeling strategy is inflected by certain key moves and company announcements. In December of 2016, Facebook published a Newsroom announcement, “Addressing Hoaxes and Fake News,” stating that content flagged for misinformation would be labeled as “Disputed by 3rd Party Fact-checkers” in a banner below the content. However, there was no interstitial over the link image, and there was no evaluation of the veracity of the content provided. This first version is a static label, and it does not provide much friction when scrolling through the Facebook feed.

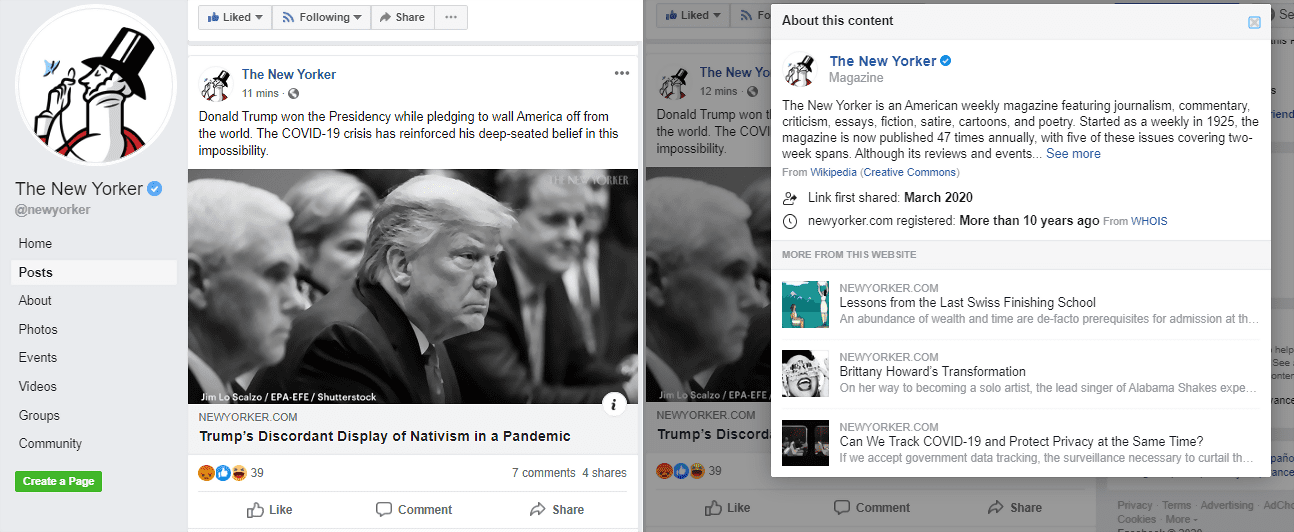

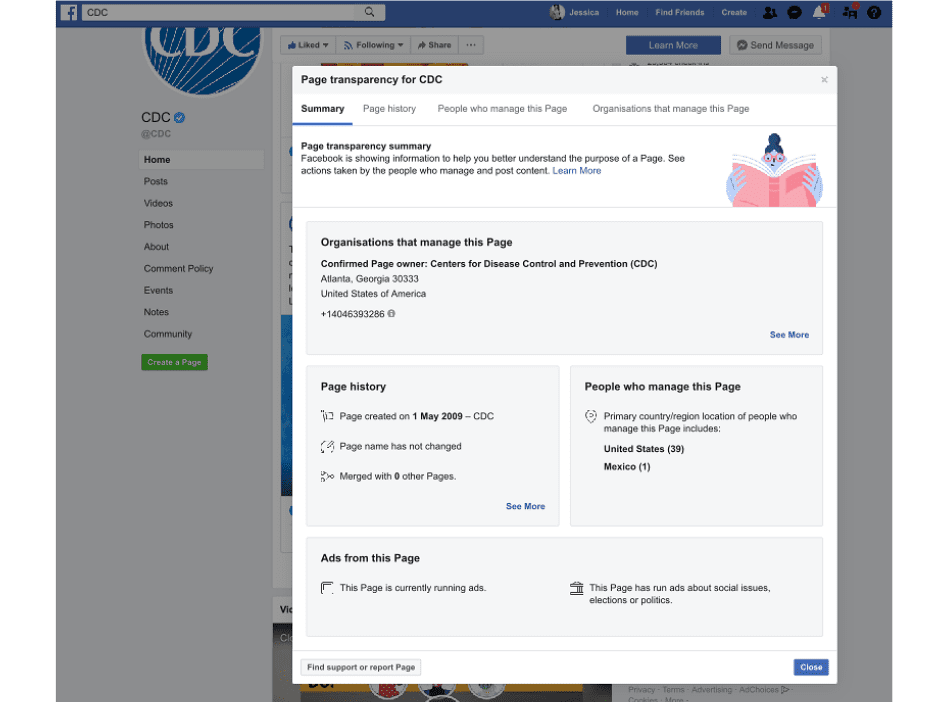

The evolution of Facebook platform labels and policies focuses on the metric of harm, to protect the safety and integrity of its users. These strategies adjust in response to content with a high likelihood of causing harm, such as medical misinformation and false news that interrupts the democratic process. In efforts to increase information transparency on the platform, Facebook launched publisher context labels in a Newsroom announcement in the spring of 2018. The in-platform context includes an abundance of verified information on the publishers, which is accessible after users click through the link.

Facebook Platform and the 2020 U.S. Elections

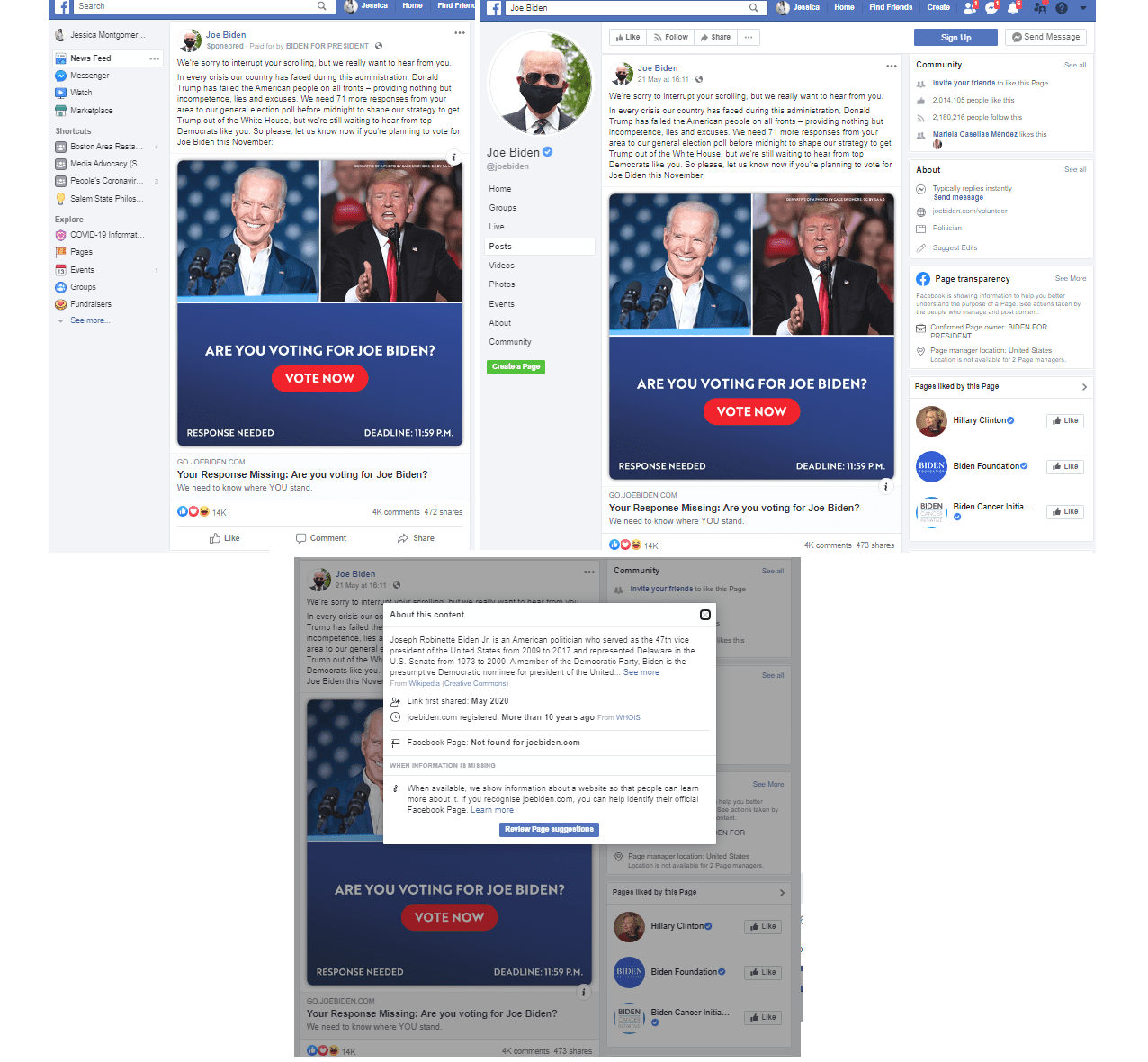

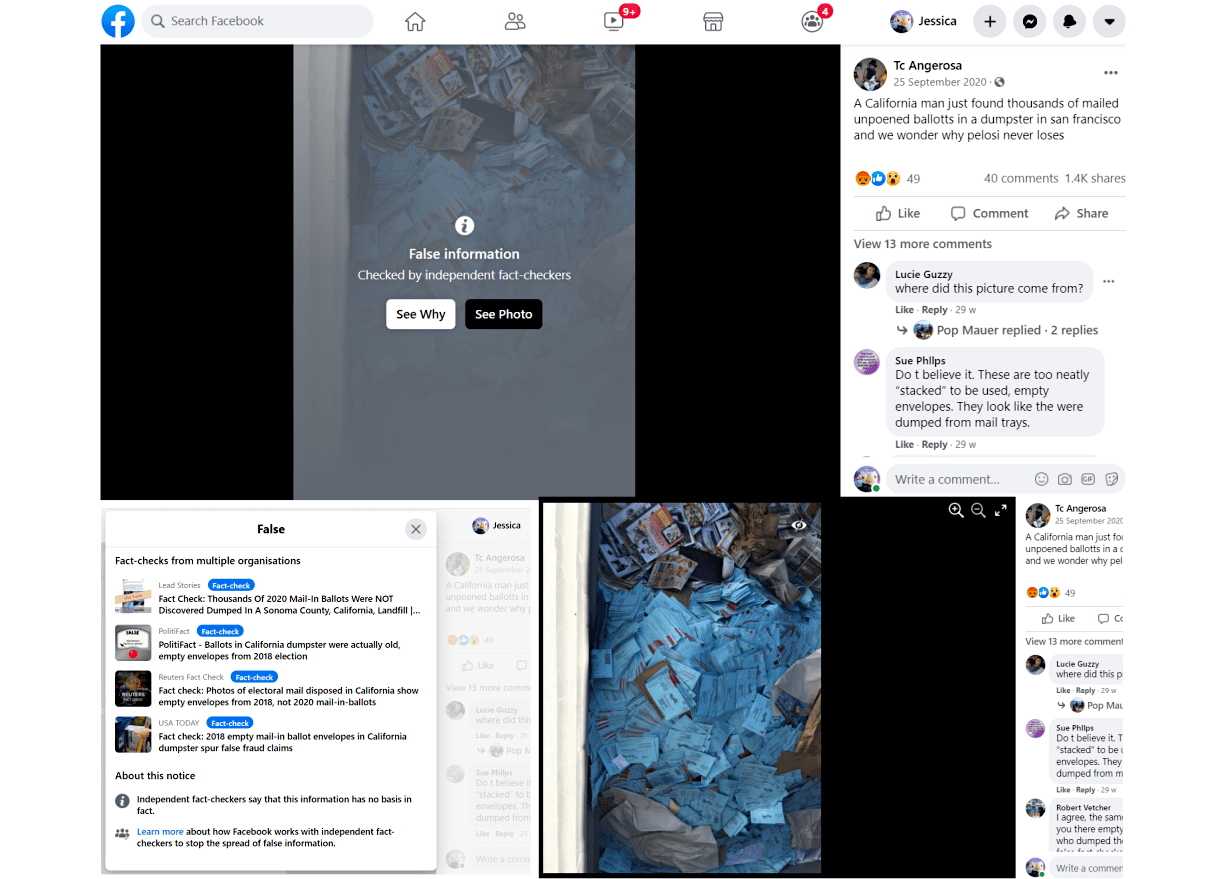

Increased action for transparency on public pages and third-party linked publishers soon expanded to advertisement transparency in April of 2018, in particular for political ads on Facebook. Advertisements carried grey subtext beneath the post publisher title stating that the advertisement is “paid for by” an individual or organization, along with information on sponsor verification and a third-party link to further information on political candidates or social issues. This notice also acts as a click-through button to view the verified sponsor’s page and view Facebook advertising policies. The verified page on the candidate or sponsoring organization must also be available for public view. These pages include a “Page Transparency” section, with information on page metrics, such as when the page was created and who moderates it.

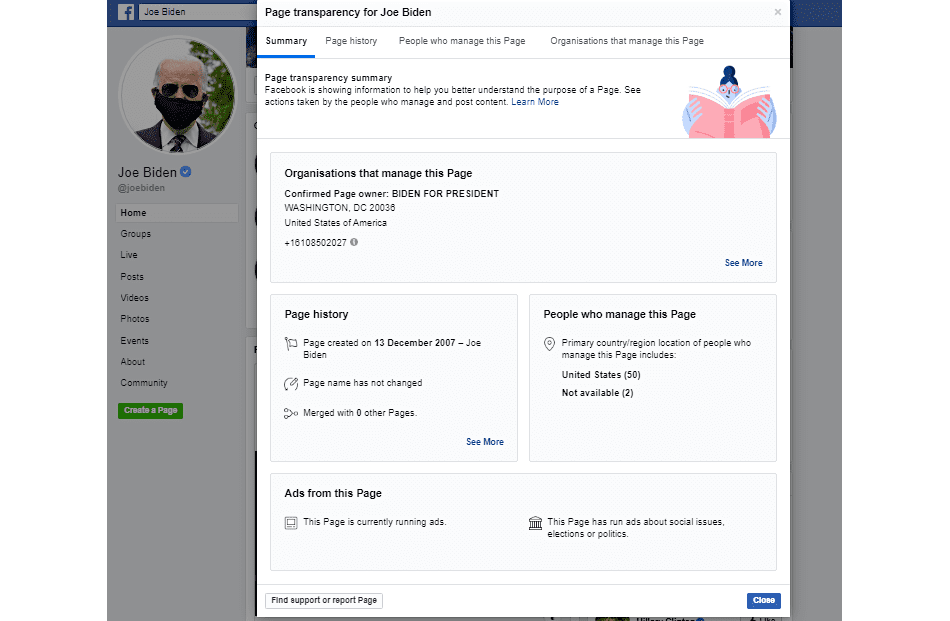

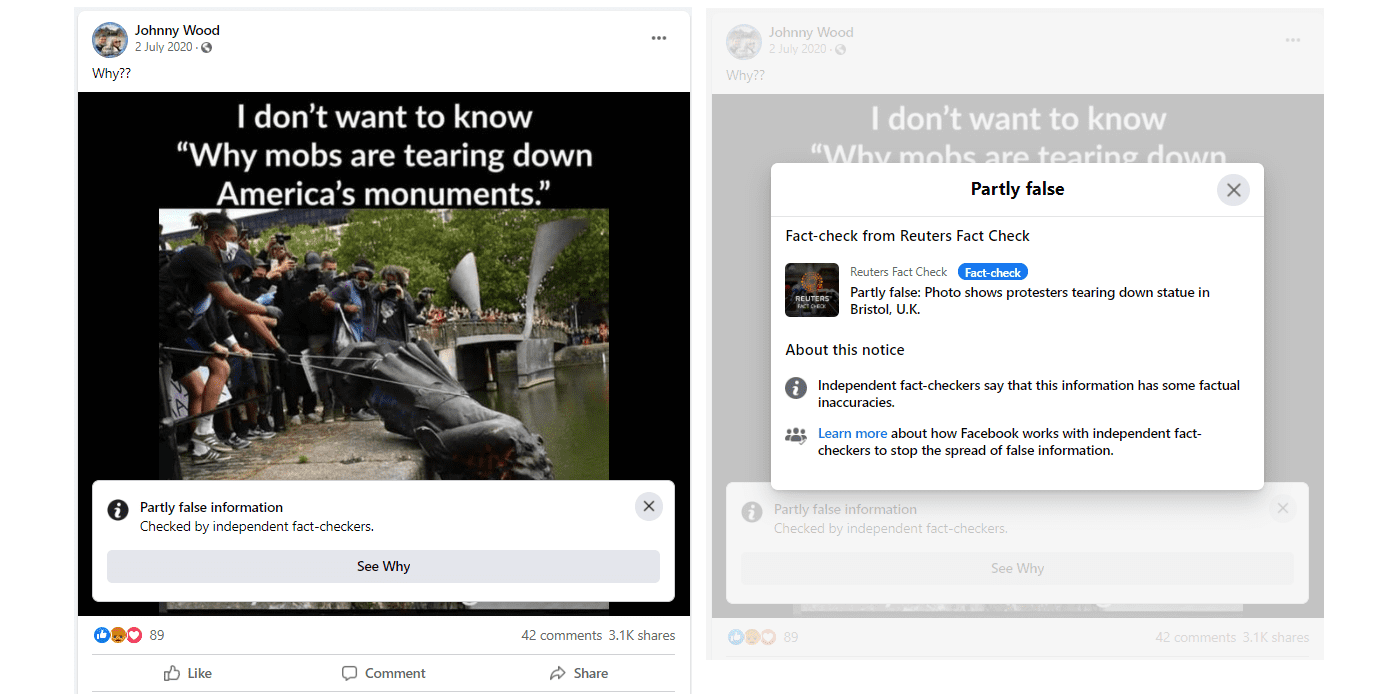

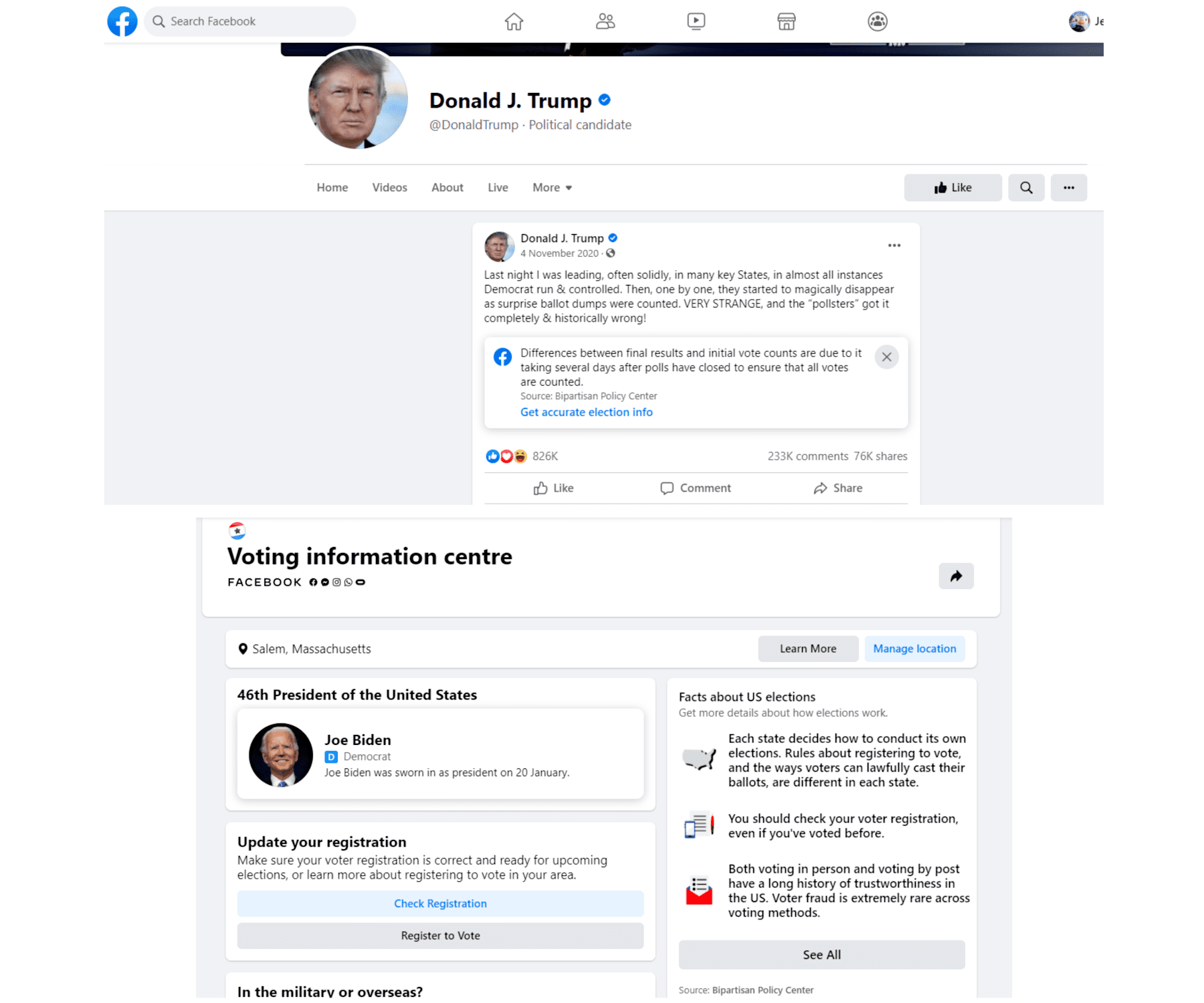

In October of 2019, the majority of labeling and content moderation updates went into effect, as Facebook Newsroom announced strategies for misinformation and harmful content regarding political topics, “Helping to Protect the 2020 US Elections.” Third-party fact-checks began having a range of ratings, most prominently “false information” and “partly false information.” In this updated version, misinformation perceived as harmful is hidden behind a blurred and grey interstitial, to prevent users from viewing the content before acknowledging the warning for misinformation. When users opt to click through and “see” the content, the interstitial and warning labels disappear entirely, only to be reinstated if the page is refreshed or the link is opened in a different tab.

Link: https://www.facebook.com/johnny.wood.169/posts/10157696275499195

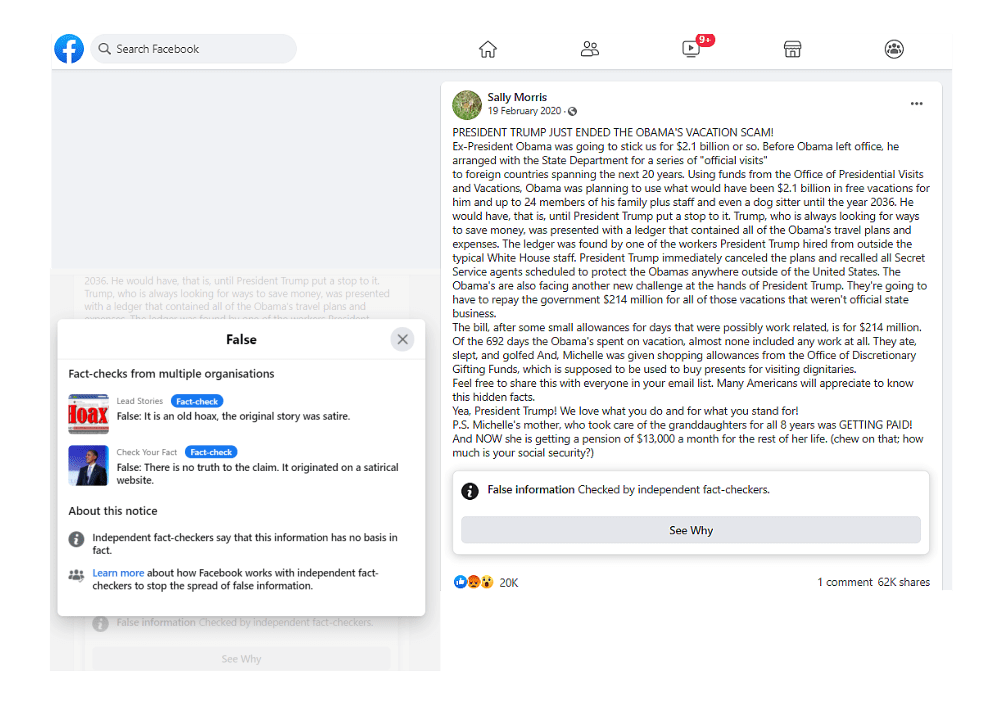

Throughout 2020, fact-check content labels on Facebook began to appear on text-only user posts. Beforehand, content warning labels only appeared as interstitials over images, video, and visual elements for third-party links. Since the text-only labels appear as banners at the bottom of posts, they may provide less friction than interstitials do. This is an important turning point in Facebook’s moderation approach.

Link: https://www.facebook.com/sally.morris.313/posts/2719497178146273

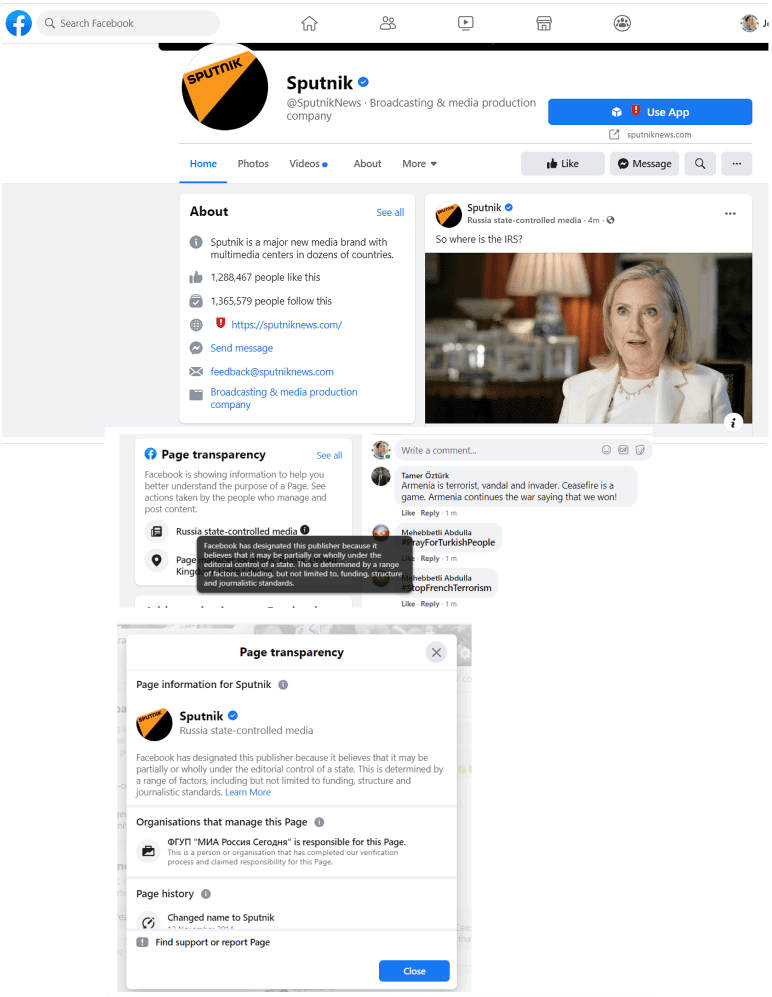

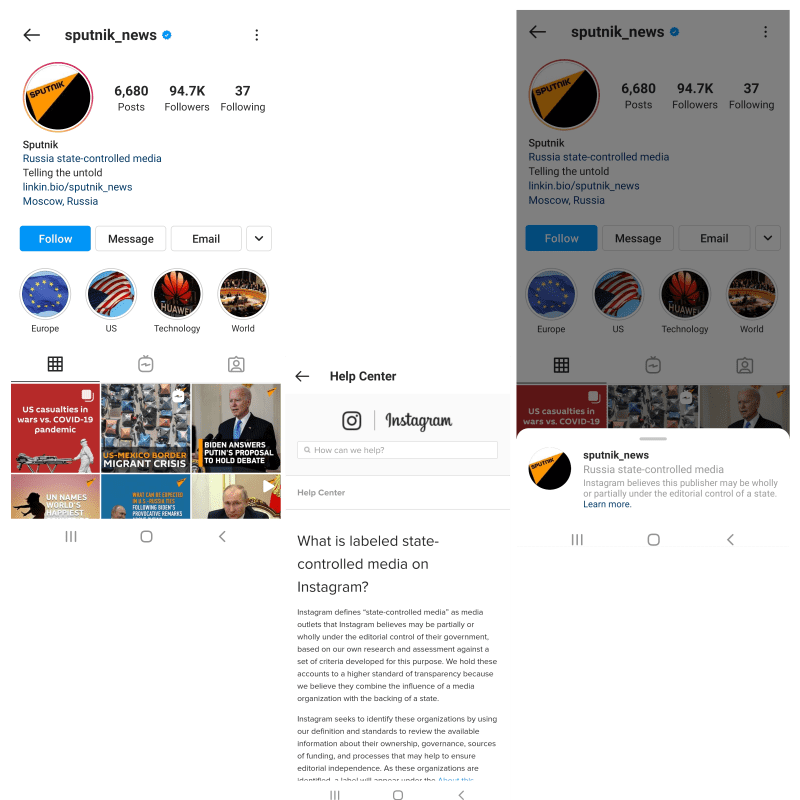

The increase in content transparency outlined in the announcement “Helping to Protect the 2020 US Elections” also includes the labeling of “state-controlled media.” This label is visible in grey subtext under the publisher title for individual content, and on their Facebook pages when scrolling down to view the “page transparency” section. The label for state-controlled media is more apparent on individual content, as opposed to relying on users to scroll down to page transparency in the page view.

Link: https://www.facebook.com/SputnikNews/?ref=page_internal

Content that is “manipulated” is differentiated in Facebook standards from misinformation. The platform made an announcement in January 2020 that the platform would remove manipulated media rather than label it. When following a Facebook link to removed content, a full-page message appears with a link to Facebook content policies. Along with manipulated content, the focus on removal strategies was more heavily implemented with the growing impact of viral topics in the United States, such as COVID-19 and the U.S. 2020 elections, to prevent the spread of misinformation perceived as harmful.

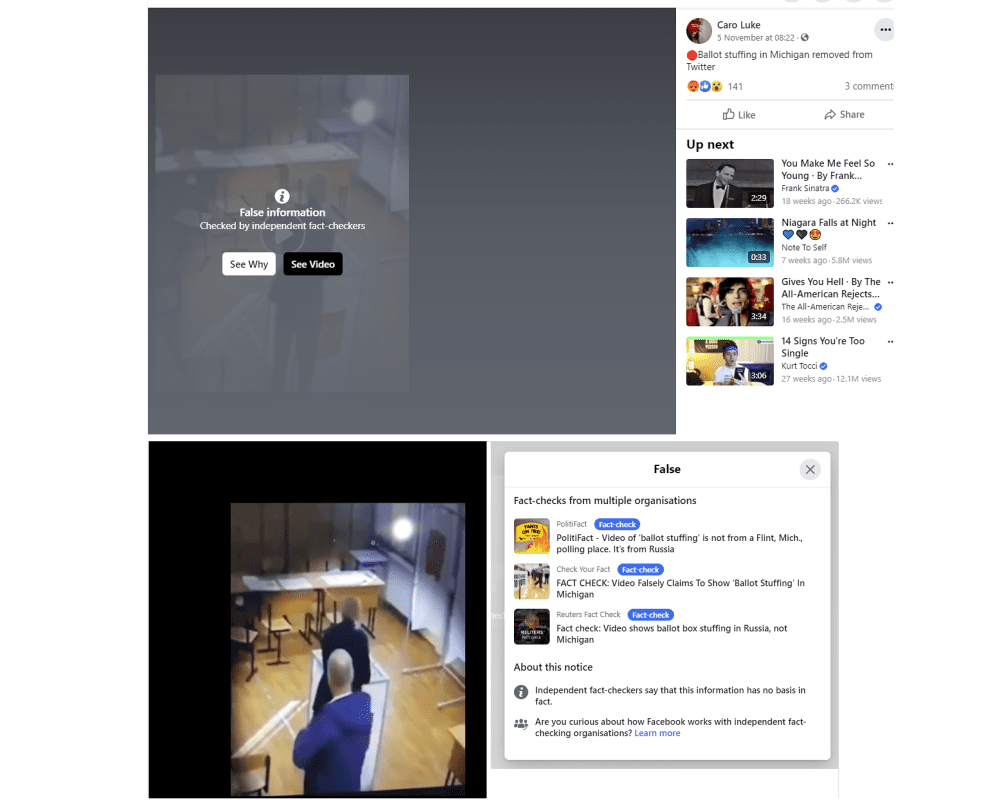

As the 2020 presidential elections drew nearer, Facebook expanded its content moderation policies regarding political content and misinformation. Particular criteria for political misinformation were further clarified regarding third party fact-check ratings, such as misinformation on voting participation and fraudulent claims on the election process. In the Facebook Newsroom announcement “New Steps to Protect the US Elections,” in September of 2020, two new types of political content labels were implemented. First, the company began labeling content that may inhibit civic engagement, such as “fraud” or “delegitimizing voting methods,” with an interstitial content warning. Second, Facebook rolled out a label for “falsely declared victories,” displayed as a banner below posts with click-through information on election data, as opposed to an interstitial.

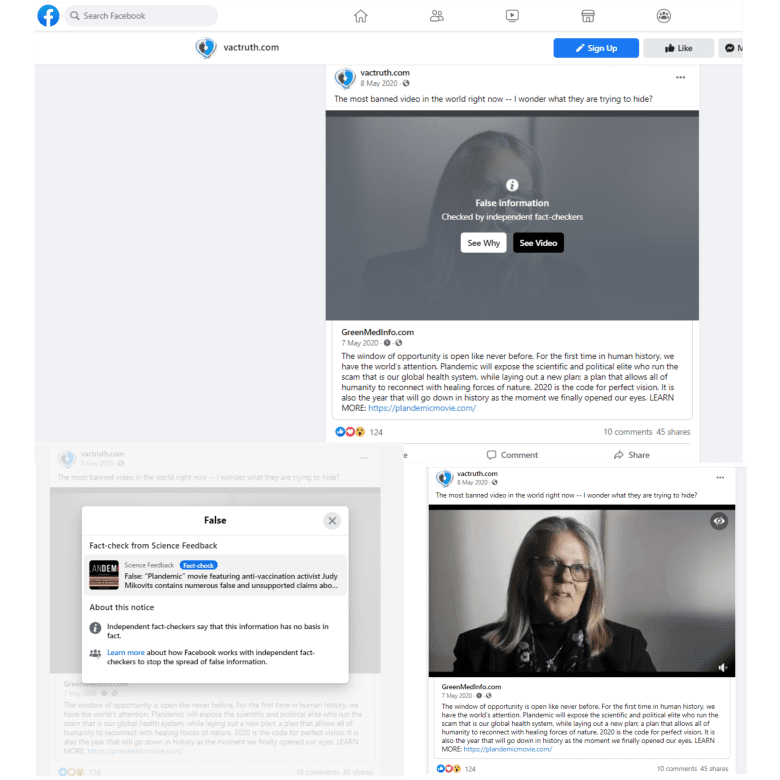

Facebook Platform COVID-19 Response

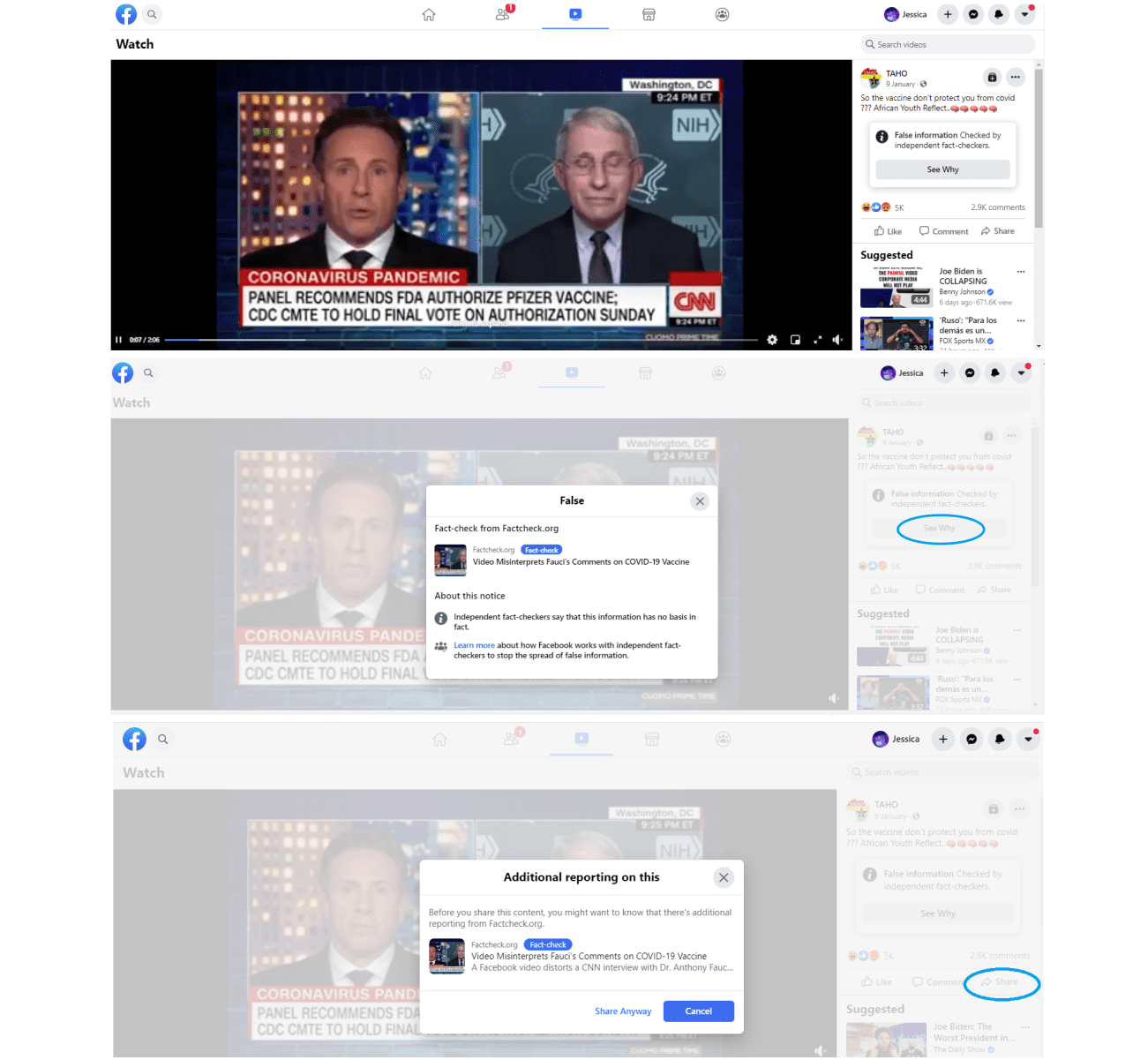

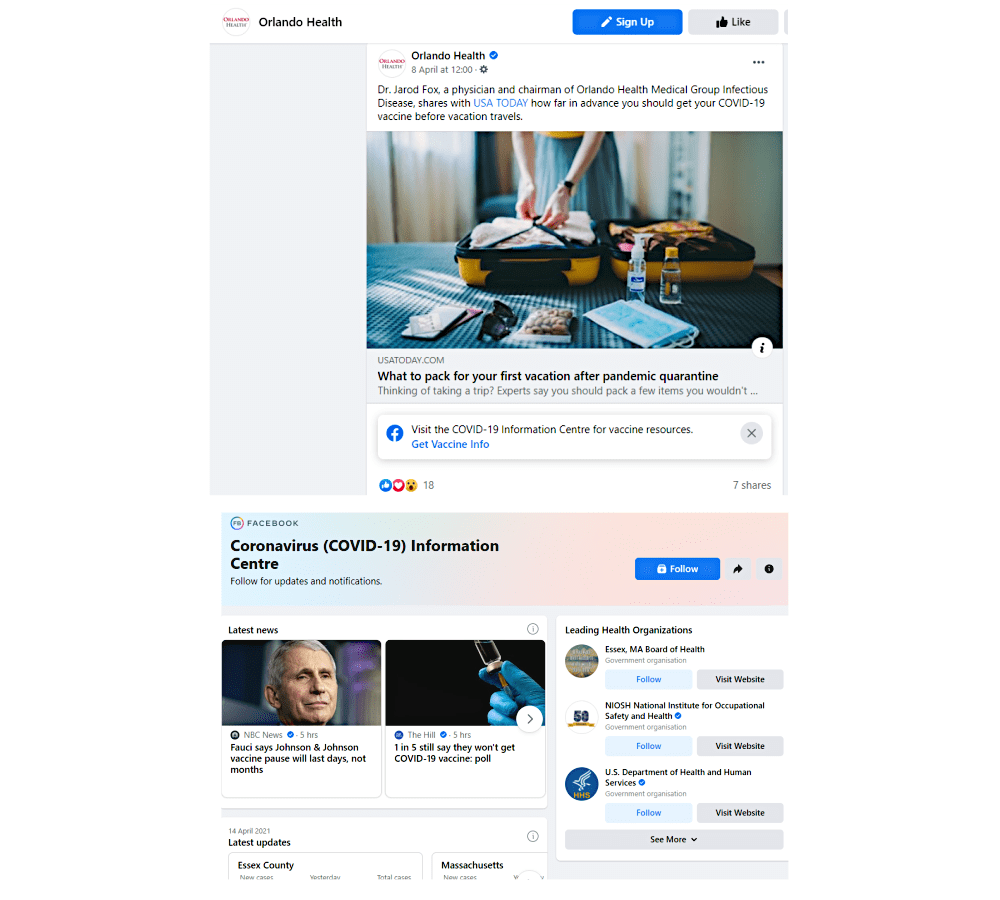

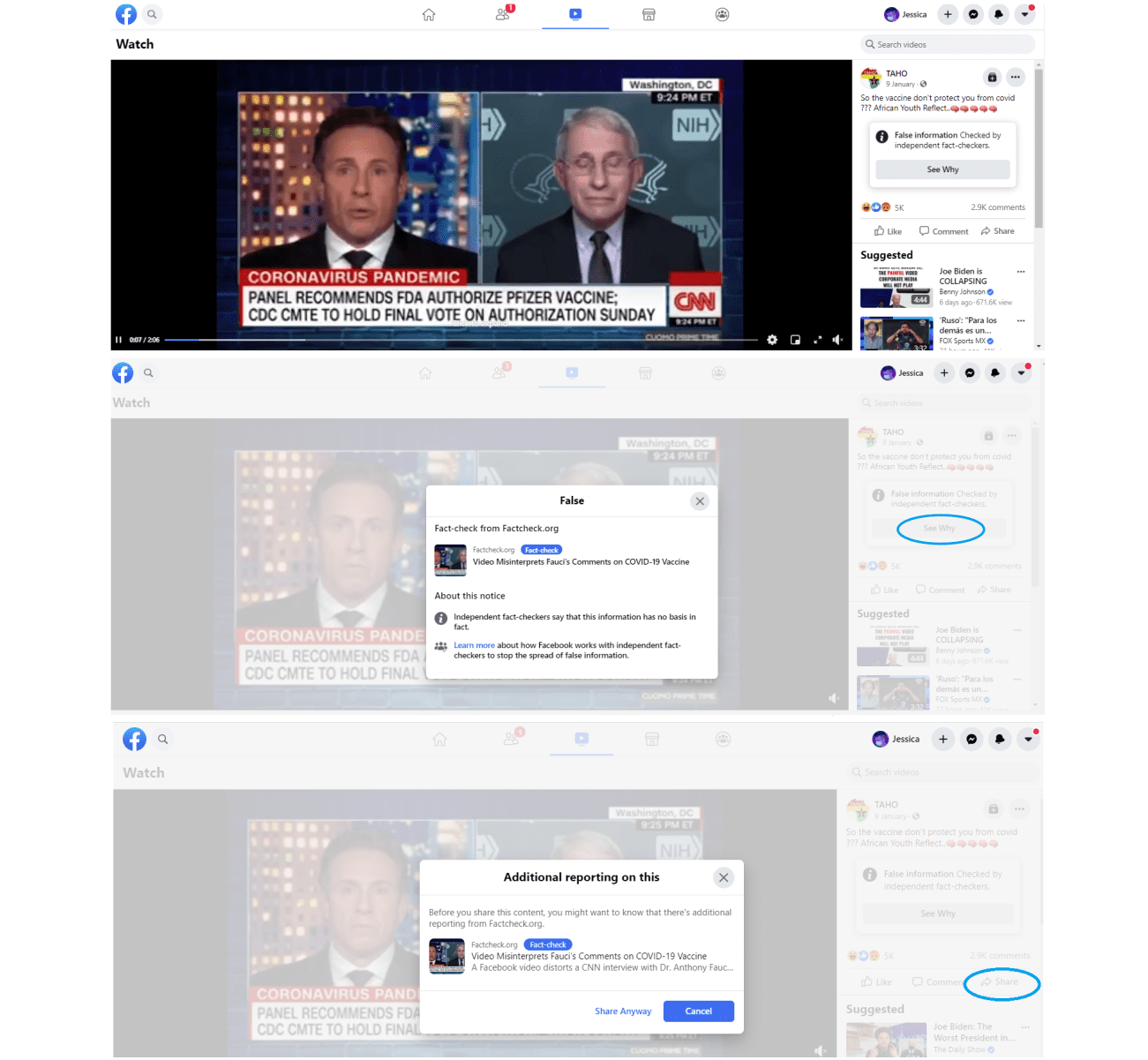

On March 25, 2020, Facebook announced its content moderation approach in response to COVID-19, including the ongoing application of third-party fact-check labels. The visual presentation of content warning labels did not change based on COVID-19 content. Harmful COVID-19 misinformation is placed behind interstitials, with the fact check rating on top, and click-through options for verified information. Applying established Facebook policy regarding misinformation to COVID-19 content was an attempt to provide consistency and transparency to content labeling, including the fact-check ratings, for users encountering labels on the Facebook feed.

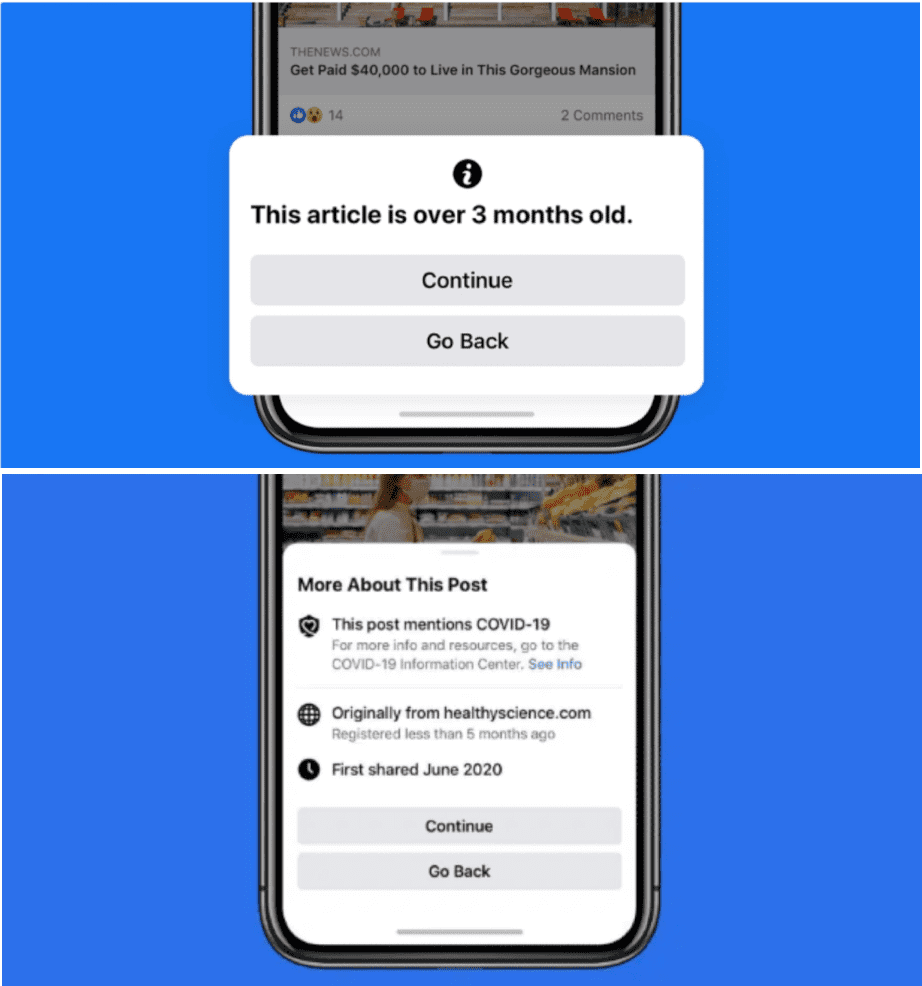

Facebook attempted to increase friction to misinformation content in response to COVID-19 in a variety of areas of its business. For instance, the Facebook chat system “Messenger” adds labels for forwarded content, and also places a forwarding limit in the application. In April 2020, Facebook also included a pop-up notification for anyone who shared a piece of information that had been labeled with a content warning. Facebook encouraged users to “Cancel” their interaction with a bright blue button on this pop-up notice, but users are still allowed to continue to “Share Anyway” with the button option in muted grey. The April 2020 Newsroom announcement “An Update on Our Work to Keep People Informed and Limit Misinformation About COVID-19” also explains how these pop-up notices became more broadly used to provide contextual information on article links, in which a notice appears to warn the user that the article they wish to share is outdated. Users also retain access to view the publisher context information, implemented earlier on.

In March 2021, Facebook shared new platform strategies to encourage users to get the COVID-19 vaccine by labeling posts that reference vaccination. Content that mentions COVID-19 vaccination procedure, vaccination site information, or even users celebrating their vaccination, will appear with a banner label below the content, which provides click-through access to authoritative information on vaccines from the World Health Organization. Any harmful vaccination content remains subject to third party fact-check and removal guidelines for COVID-19 misinformation.

Other Expanding Labels on the Facebook Platform

While new labels are added, and some functions are retained, Facebook frequently updates its user interface, changing the visual impact of labels and authoritative information. For instance, in the 2020 interface update – known as FB5, or the “New Facebook” – the “Page Transparency” page became more compact and brighter, with bolder text. There are also more statistics and information readily available in a page’s “About” sidebar section, such as membership and related links.

Link: https://www.facebook.com/CDC/

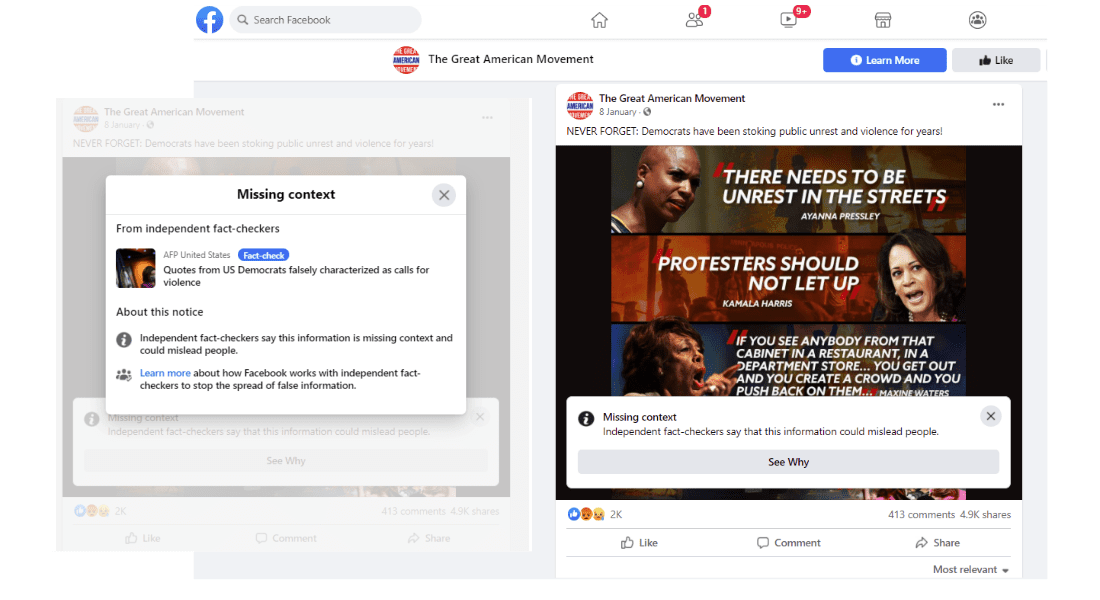

While manipulated media was previously removed from the platform altogether, in August of 2020 Facebook announced new fact-check ratings for altered media and content that is missing context. “Altered” photos and videos are displayed behind an interstitial, with the rating placed on top. However, “Missing context” labels are displayed as a banner below the post, providing potentially less friction.

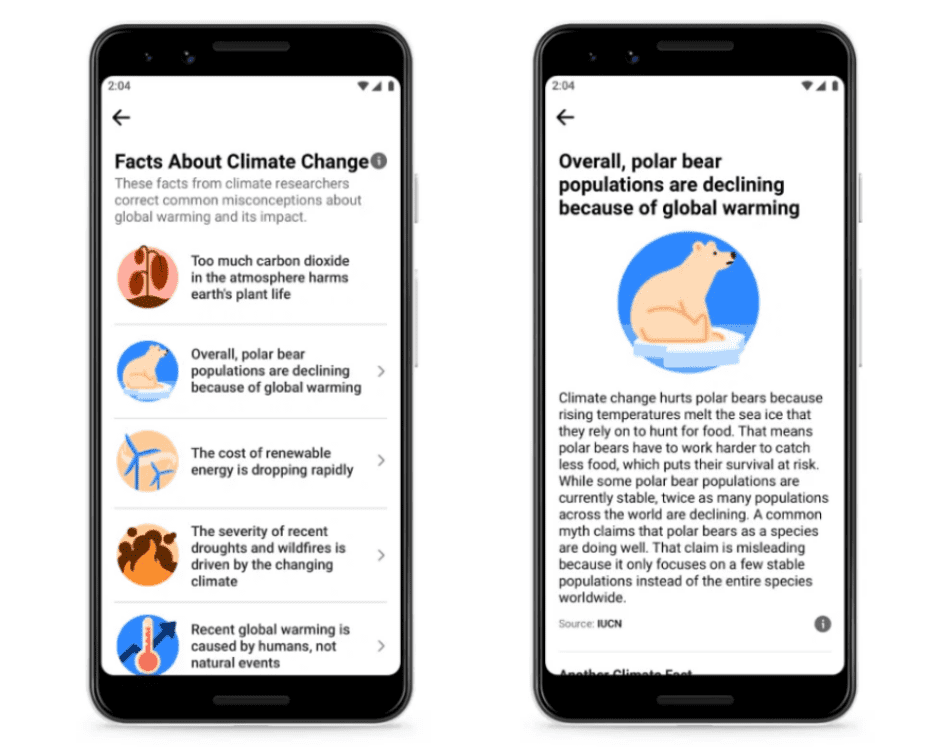

At the start of 2021, new labels on climate change-related information began to be applied, which direct users to Facebook’s Climate Change Information Center. Facebook’s goal is not only to moderate misinformation, but to connect users with credible climate change information, as stated in the Newsroom announcement.

Groups and Pages on the Facebook Platform

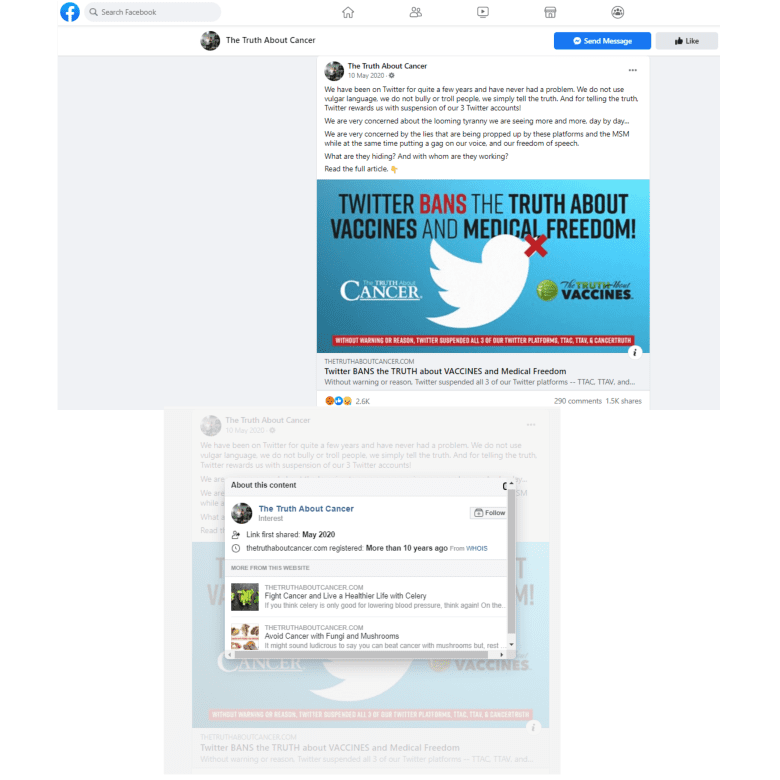

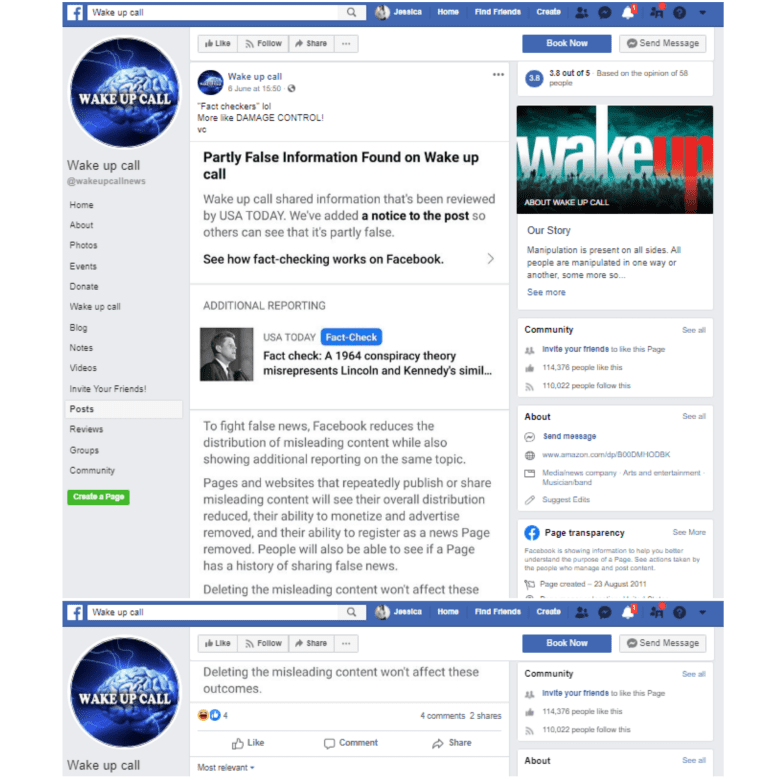

With the increased volume of misinformation and hoaxes amid the presidential elections and global pandemic, content moderation policies in Facebook Groups evolved. Traditionally, Facebook regarded Groups as “private” spaces, with lessened content review. But in September 2020, Facebook announced new group moderation strategies, in “Our Latest Steps to Keep Facebook Groups Safe.” Page and group content would now be subject to fact check content warning labels, and Groups would gain transparency through “publisher context” that provided information such as date of formation and number of members.

Link: https://www.facebook.com/thetruthaboutcancer/posts/3002554243171198

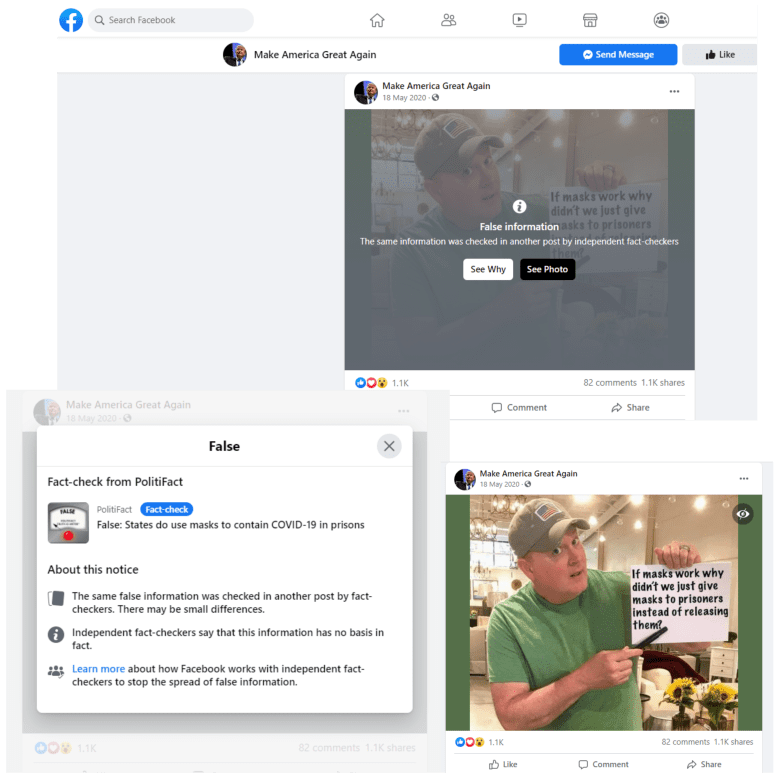

The increased Group moderation was in response to the growing political tensions in the context of the 2020 U.S. Election and related social issues. The Community Standards Enforcement Report that Facebook published in August 2020 included an abundance of policy changes regarding hate speech, and moderation against hate and terrorist groups. NewsGuard, a journalistic fact-checking organization, had observed a number of particularly dangerous groups on Facebook spreading COVID-19 misinformation. Content labels in groups appear in the same format as content in an open feed, although the implementation appears to be less consistent.

Link: https://www.facebook.com/DJTMAGA/posts/2930576500324941 …

Groups on Facebook are regarded as viral hubs of misinformation, in addition to hate speech, harassment, and organizing in harmful ways. While some content is moderated, there is also still an abundance of images and links with harmful misinformation that get viewed by thousands of Group members.

Instagram Overview

Developers Kevin Systrom and Mike Krieger created Instagram in 2010 as a photo and video sharing platform, and it was soon acquired by Facebook Inc., in 2012. Users can interact with posts on the feed by liking them, commenting on them, or bookmarking them to an archive, as well as exploring the featured “hashtag” system. Instagram also became one of the first platforms to heavily feature ephemeral 24-hour stories on user accounts. Facebook Inc. has considered Instagram a compatible social media platform with Facebook, and the two platforms share many policies and moderation implementations. Users can link and share content between these two platforms relatively easily, with settings to link their accounts.

Instagram Content Labeling

Instagram examples are captured with mobile screenshots, since most of the warnings and click-through interactivity for content labeling are only available on the mobile application.

Since Facebook Inc. acquired Instagram, many of their content strategies and guidelines are shared, including Instagram’s policies on misinformation and third-party fact checkers. The Instagram Community Guidelines are presented more broadly than Facebook’s.

- Removal is applied to content for violations such as (a) “nudity,” (b) “self-harm,” (c) “support or praise terrorism, organized crime, or hate groups,” (d) offering “sexual services,” “firearms”, or otherwise exchanging illicit or controlled material illegally, and (e) “content that contains credible threats or hate speech.”

- Reducing content entails “filtering it from Explore and Hashtags, and reducing its visibility in Feed and Stories.”

- Informing users is reinforced on Instagram, as with Facebook, using the content warnings.

Instagram’s initial outlook on content moderation focused on enabling transparency for user profiles. This focus is outlined in the company update from August 2018, which provides users with tools to authenticate Instagram pages. “About This Account,” which can be accessed by clicking the midline ellipsis when viewing a profile page, informs users regarding when a page was created, advertising that has been published through the page, and other information on the page’s public online details.

Instagram and the U.S. 2020 Elections

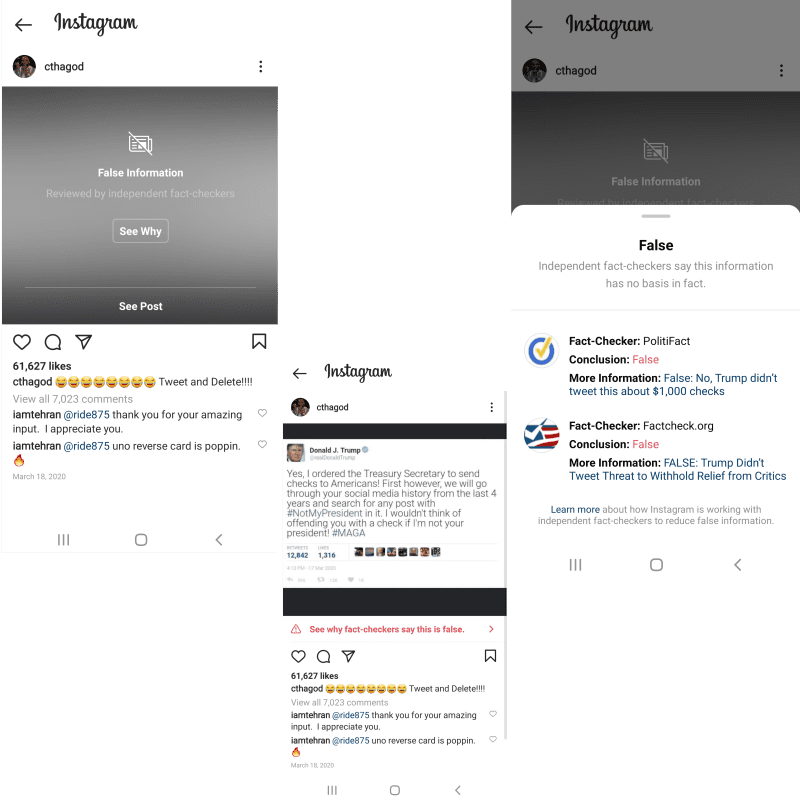

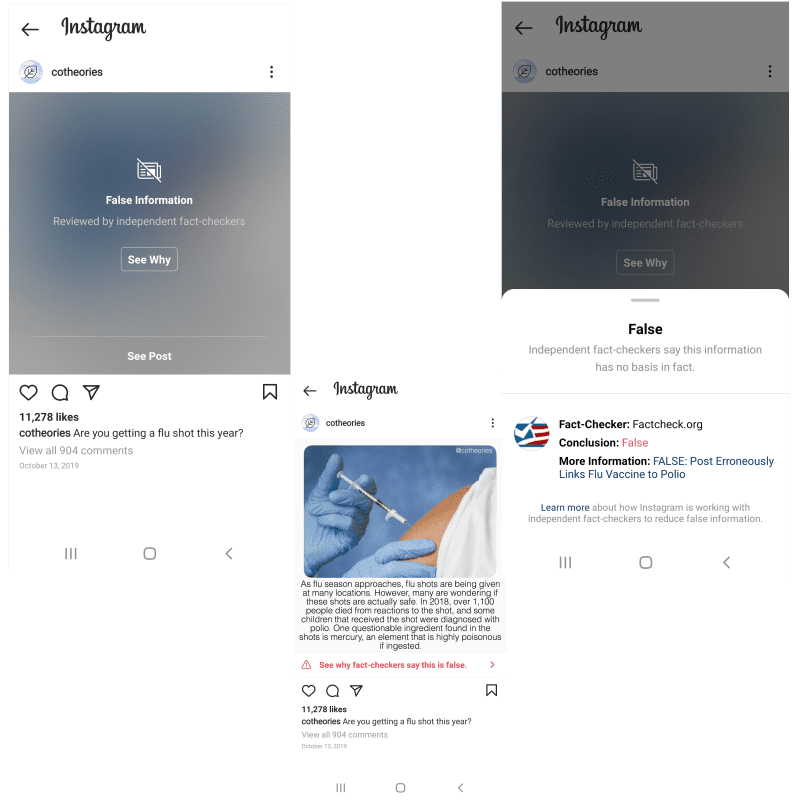

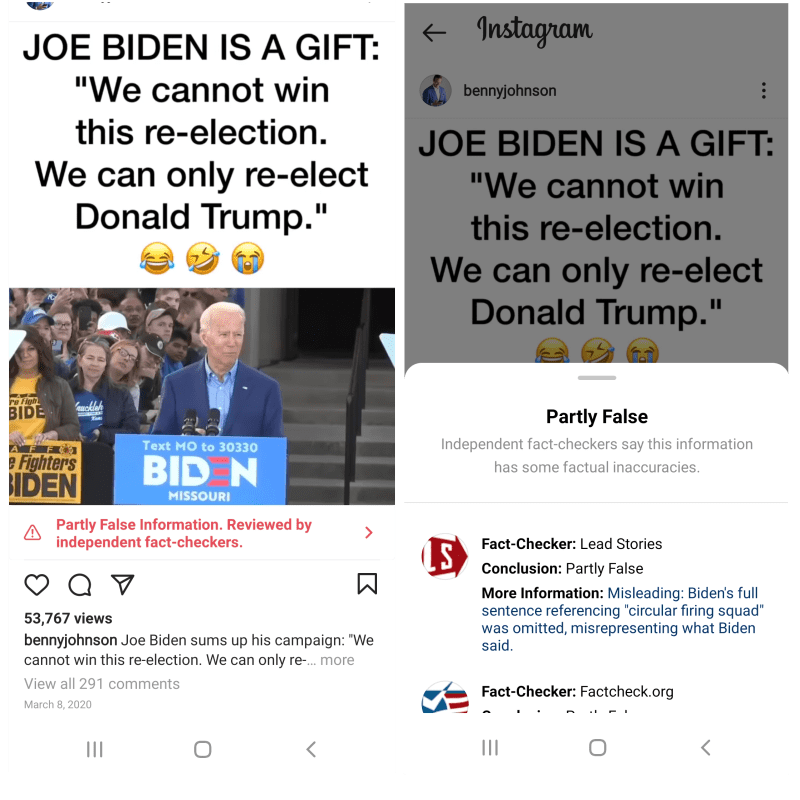

Instagram began implementing content warning labels and fact check ratings in October 2019. These updates were a direct effort to uphold content integrity for the 2020 U.S. Election on both Facebook and Instagram. Until this point, Facebook Inc., which had begun content labeling and fact check review three years prior on the Facebook platform, had not applied its content moderation policies or procedures to the Instagram platform.

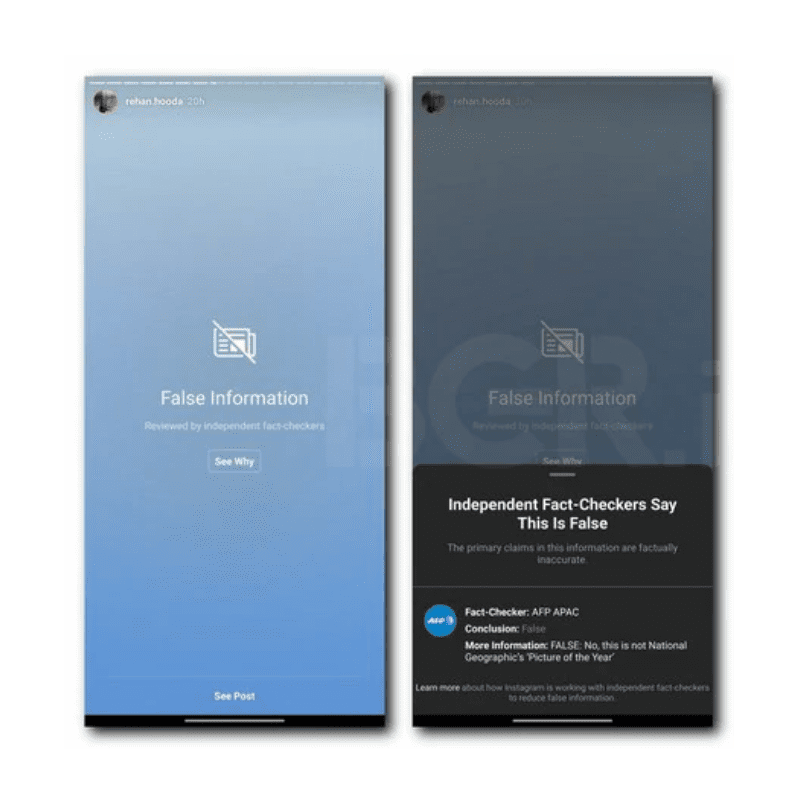

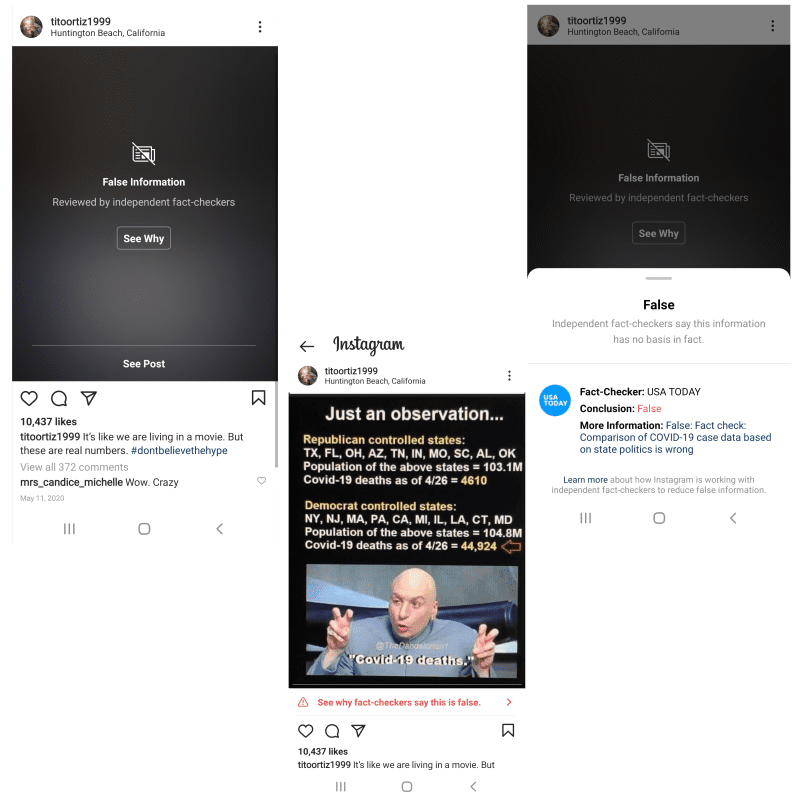

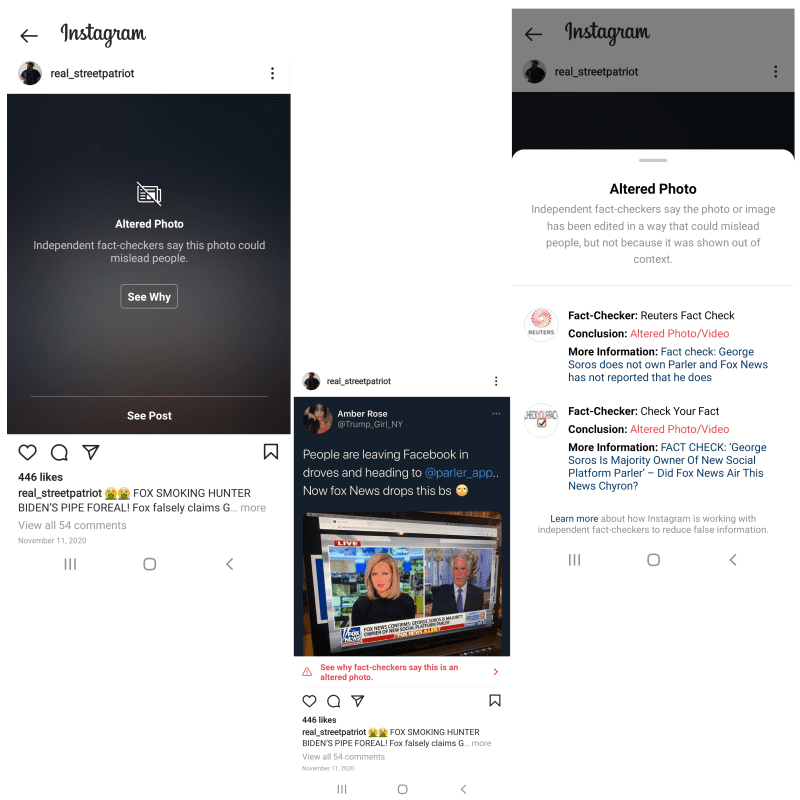

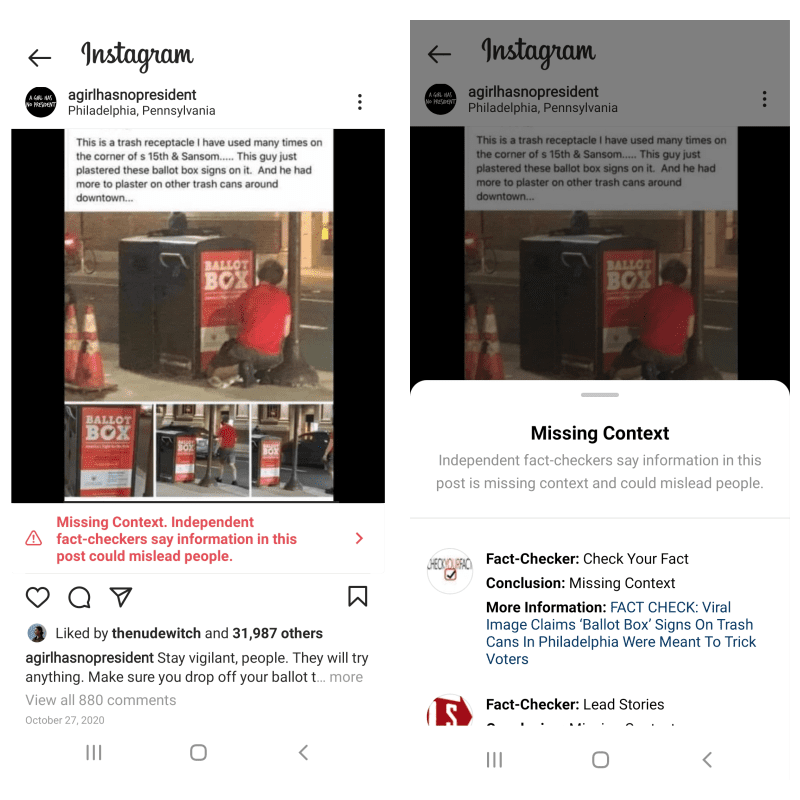

Fact check ratings on Instagram follow the same evaluation criteria as on Facebook, and any Instagram posts with a content warning label have the rating in front of a blurred grey interstitial over the post. Users can click through to “See Why” for rating information and verified articles. When the user opts to view the content, the content warning remains in view as a banner below the content in bright red text. This is different from viewing labeled content on the Facebook platform, which does not retain a warning when users opt in to view content. The additional warning in this step provides more friction for Instagram content.

In addition to content posted on profiles and onto the feed, Instagram also reviews content on users’ ephemeral stories. As with feed content, the platform can implement content warning labels on stories, where the full frame of the story is hidden behind a blurred interstitial, and the rating is placed in front with “See Why” and “See Post” click-through options.

Along with these updates in response to election misinformation, Instagram expanded transparency alongside Facebook by adding a “state controlled media” label to relevant media profile pages, presented as blue click-through text underneath the profile page name.

In December of 2019, Instagram updated misinformation moderation with fact checking and labeling content, and clarified policies on content beyond the elections and political topics. Given that Instagram is functionally a subsidiary, all facets of Instagram fact-checking refer to Facebook Inc., such as the ratings for “False information” and “Partly False information.”

Link: https://www.instagram.com/p/B9ev9CHlaM_/?utm_source=ig_embed

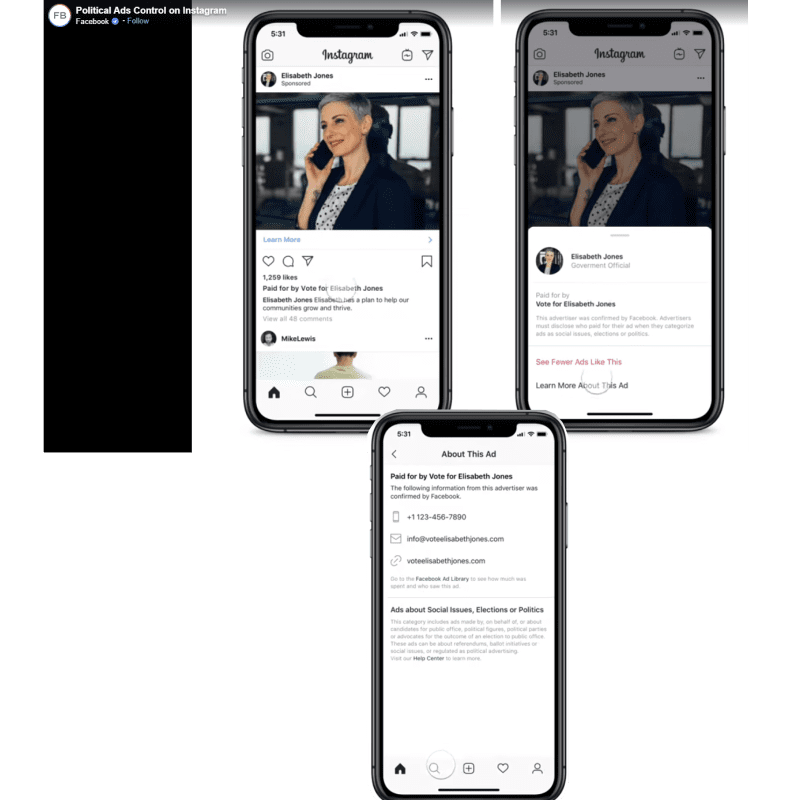

Drawing closer to the U.S. presidential elections, in June 2020 Instagram updated its advertising policies, in a Facebook Inc. Newsroom announcement, “Launching The Largest Voting Information Effort in US History.” This includes a “Paid for By” disclaimer for sponsored content, and an improved interface for data comparison in the Facebook Inc. Ad Library.

Instagram and COVID-19

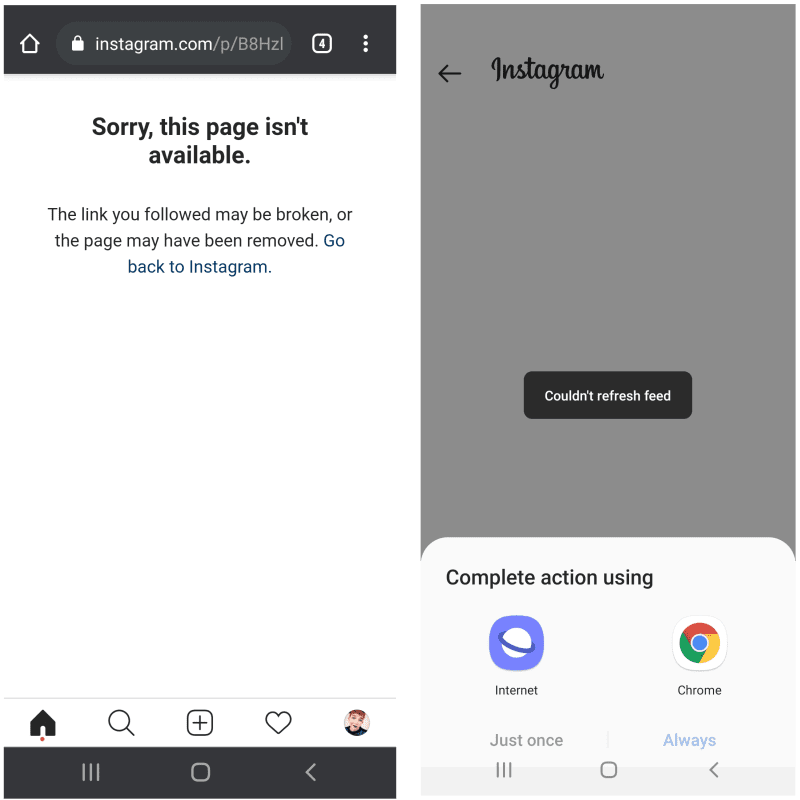

On the 24th of March, 2020, Instagram released its announcement regarding COVID-19 content and safety. Under the metric of harm, content is removed with a full-page message displaying, “Sorry, this page isn’t available,” and a link to return to the Instagram home page.

Unlike Facebook removal pages, there is no direct link provided for further information on platform policies. COVID-19 content is subject to the same implementation as other misinformation and fact check review, with rated content hidden behind a blurred interstitial. All COVID-19 content policy on Instagram refers to Facebook. However, Instagram is more intent on downranking and removing content from Explore and hashtag networks, as compared to Facebook content reduction policies. Responsibility for guidelines and fact-checking on conspiracies and misinformation also lies primarily with the World Health Organization.

Other Expanding Labels on Instagram

Instagram also adopted the new “Altered” and “Missing Context” labels in August of 2020. “Altered” content is hidden behind a blurred interstitial with a rating on top, and click-through options to “See Why” and “See Post.” On Instagram, the “Missing Context” label appears as a banner below the content, with bright red text and click-through options for verified contextual information.

Facebook and Instagram Research Observations

Visual design for Facebook and Instagram has an internal consistency that is clear and recognizable by users across platforms, which is especially important as the two continue to merge together, to some degree, with regard to user interface and functionality. This includes the font type and blurred interstitials when it comes to warning labels, as well as keeping the label taxonomy analogous, such as “altered” content, and the “false” and “partly false” fact-check ratings.

These platforms approach moderation, based on the metric of “harm” to their users, by increasing friction, embedded into the content itself. Whether an interstitial evaluation or an arrow icon, Facebook Inc. implements visual indicators for misinformation and harmful content to its users. The requirement of click-through actions on Facebook and Instagram especially, while it may deter some users from seeking out the authoritative information, is at least one more action to prevent users from interacting with harmful content. That, at least, appears to be the theory.

It is especially important to note the real-world events that prompted change in policy and application of content labels across Facebook Inc. platforms. Instagram picked up more moderation policies in the 2020 timeline, in synchronization with metrics of harm and viral misinformation on the Facebook platform. Overall, Facebook and Instagram both have similar functional features as social media platforms — stories, content feed, profiles, live casting — but there are key differences in utility and information sharing that should call for more defined areas of content review and evaluation.

A few examples of differences would be that Facebook can have content shared within private Groups; that Instagram is more heavily reliant on hashtag use; and, while both platforms can post video and image content, Facebook also has text-only content to review. The most apparent labeling design choice on Instagram is that, even when a user clicks through to view fact check rated content, the content warning label remains visible in the red text banner below the post. This reinforces the validity of the rating, and increases friction against a user sharing the content.

What is lacking in Facebook Inc. policy considerations is the distinction between Instagram and Facebook platforms regarding content type and user behavior. As Facebook Inc. applies the same content labeling methods to both platforms, the appearance of their content labels also indicates a lack of transparency in how Facebook Inc. evaluates and fact-checks content with appropriate contextual considerations. By using the same visual designs, users are not provided with enough information to understand how the spread of misinformation and interaction with harmful content may be different on each platform.

The visual designs are a clear and concise implementation of labeling, fact-checking, and content moderation across Facebook Inc. that incorporates multiple actions within content before users interact with the content, making it more likely that they view corrected information. However, Facebook Inc. needs to present more transparency on how it approaches the contextual implications of the platforms themselves, beyond the isolated piece of content being moderated.