Reddit Inc.

The Evolution of Social Media Content Labeling: An Online Archive

As part of the Ethics of Content Labeling Project, we present a comprehensive overview of social media platform labeling strategies for moderating user-generated content. We examine how visual, textual, and user-interface elements have evolved as technology companies have attempted to inform users about misinformation, harmful content, and more.

Authors: Jessica Montgomery Polny, Graduate Researcher; Prof. John P. Wihbey, Ethics Institute/College of Arts, Media and Design

Last Updated: June 3, 2021

Reddit Inc. Overview

Reddit was founded in 2005 by Steve Huffman and Alexis Ohanian. It started as a host site for online forums, divided into different pages, or “subreddits.” The platform expanded to include private chats, voting and “currency” for users to boost their favorite content. Forum elements can include text, photos, gifs, videos, and external links within posts’ comment threads.

Reddit Inc. refers to the subreddits as “communities,” where users gather to share content and information. Content moderation is carried out by two groups: the employees of Reddit (admins of the platform) and the user-volunteers who review and implement the rules within their community (the moderators, or “mods”). In 2014, the Reddit Blog published a comprehensive overview of platform functionality, “How reddit works,” with a focus on user engagement for learning and discovery.

Reddit published new content moderation policies in 2020, including an attempt to bolster tagging systems and to increase review of communities that violated the platform guidelines. The majority of moderation on the platform has been carried out by the volunteer mods as opposed to the admins.

Reddit – Content Labeling

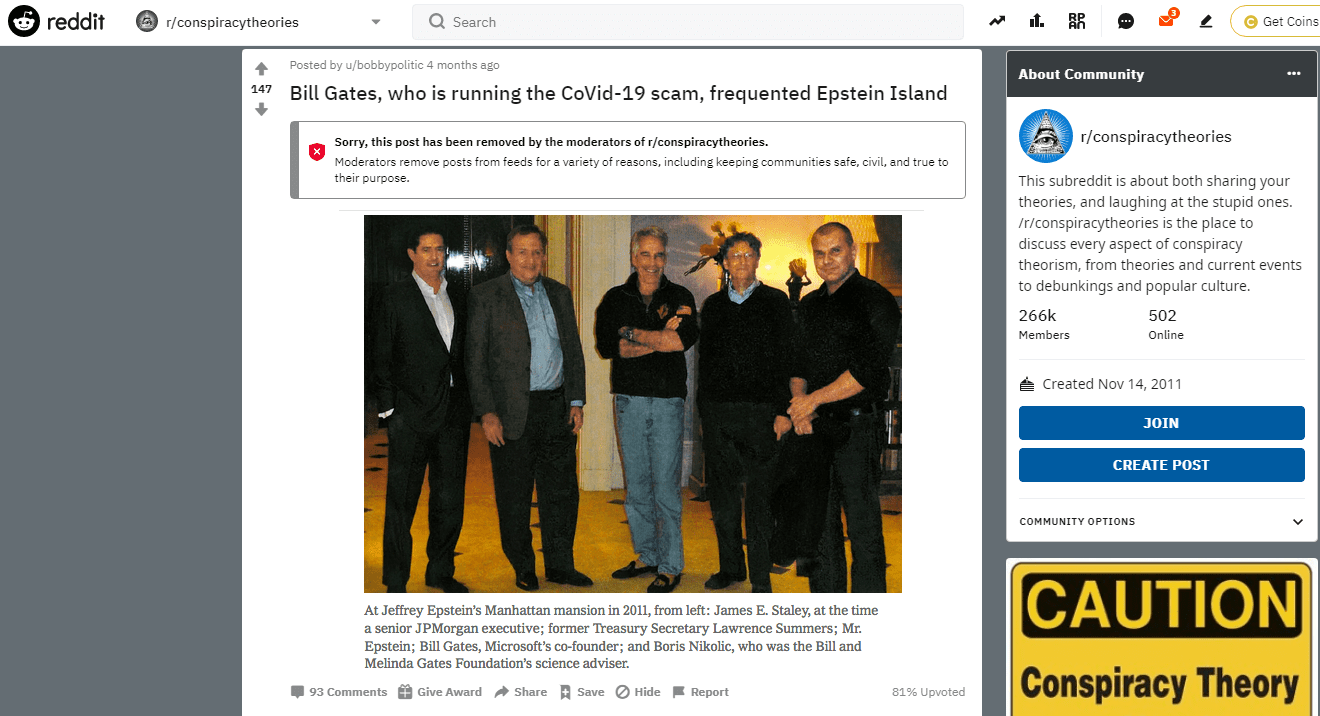

Reddit’s overall approach places responsibility on users and distributed moderators to agree to and uphold the rules and standards within each thread or subreddit, as opposed to implementing fact-checks by centralized moderators. Reddit’s content policy distinguishes between “prohibited” content, which is removed by Reddit admins, and “unwanted” content, which can be restricted at the discretion of subreddit moderators for their communities before being subject to action by the platform.

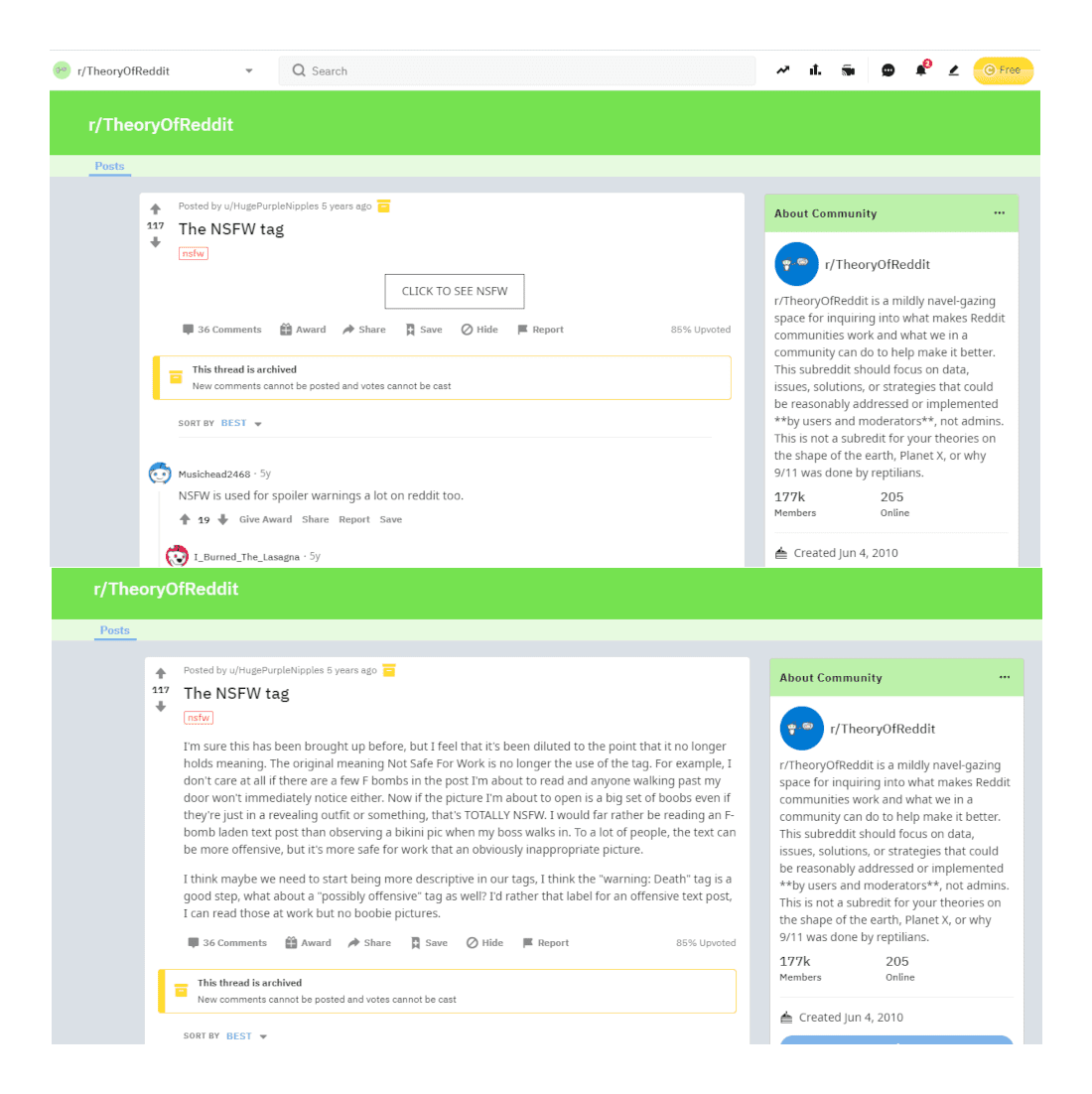

Reddit made its first move to enable content labeling utilizing its many volunteer community moderators in the summer of 2011. The NSFW tag, an acronym for “Not Safe for Work,” is meant to indicate sexually explicit or violent content. On Reddit, as a r/modnews post from 2011 explains, communities tagged with NSFW were hidden from view. The NSFW content would either be collapsed text in the old platform format, or have images and video hidden behind a blurred interstitial.

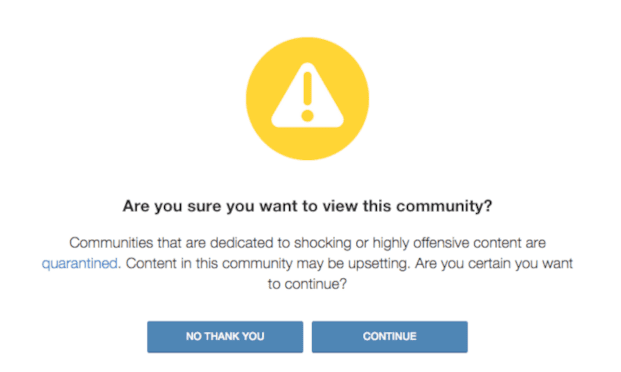

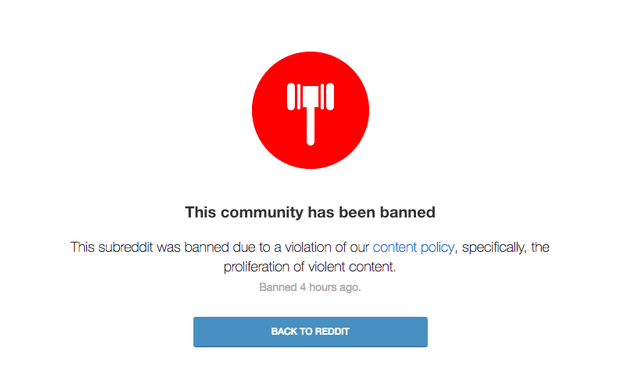

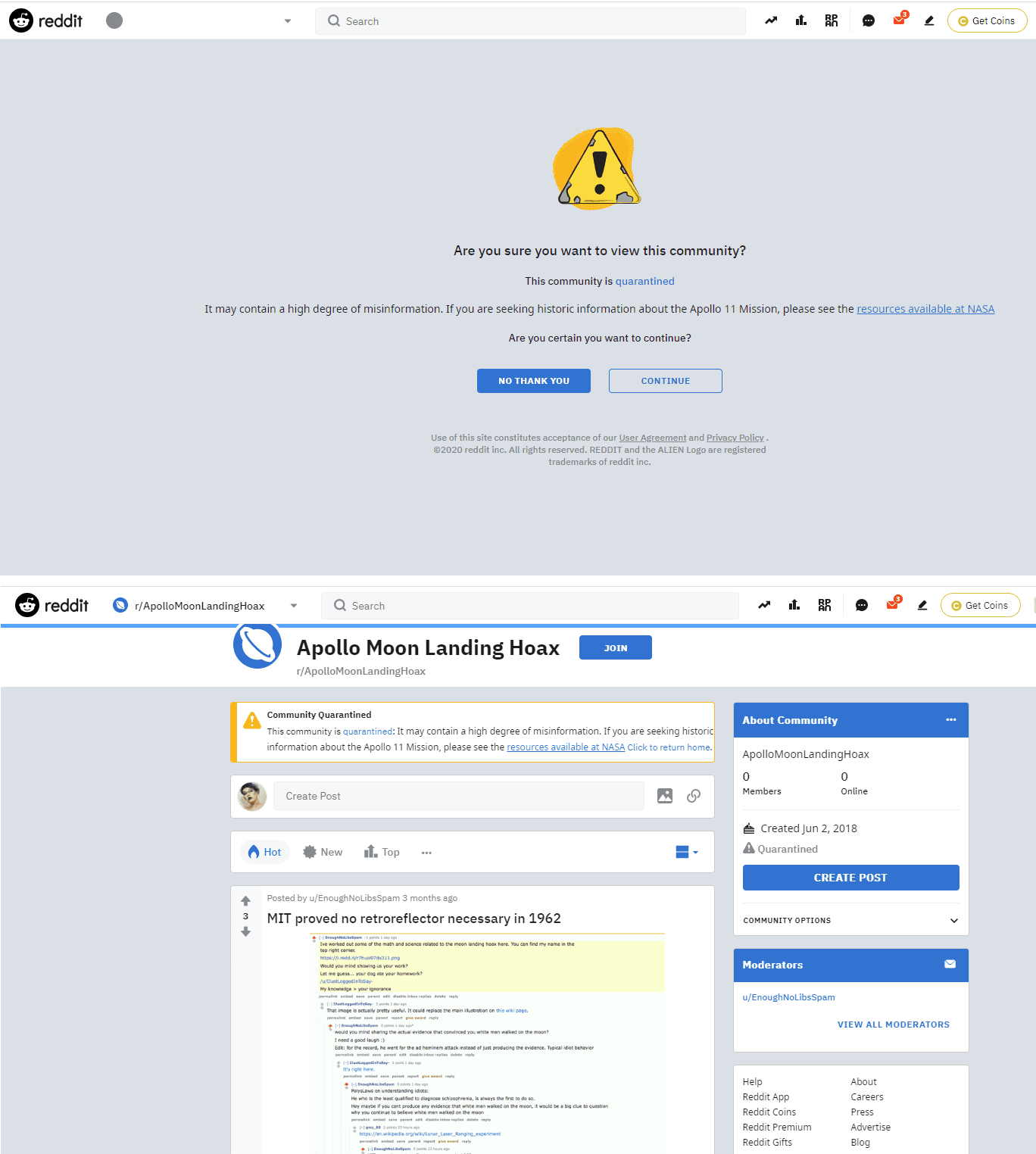

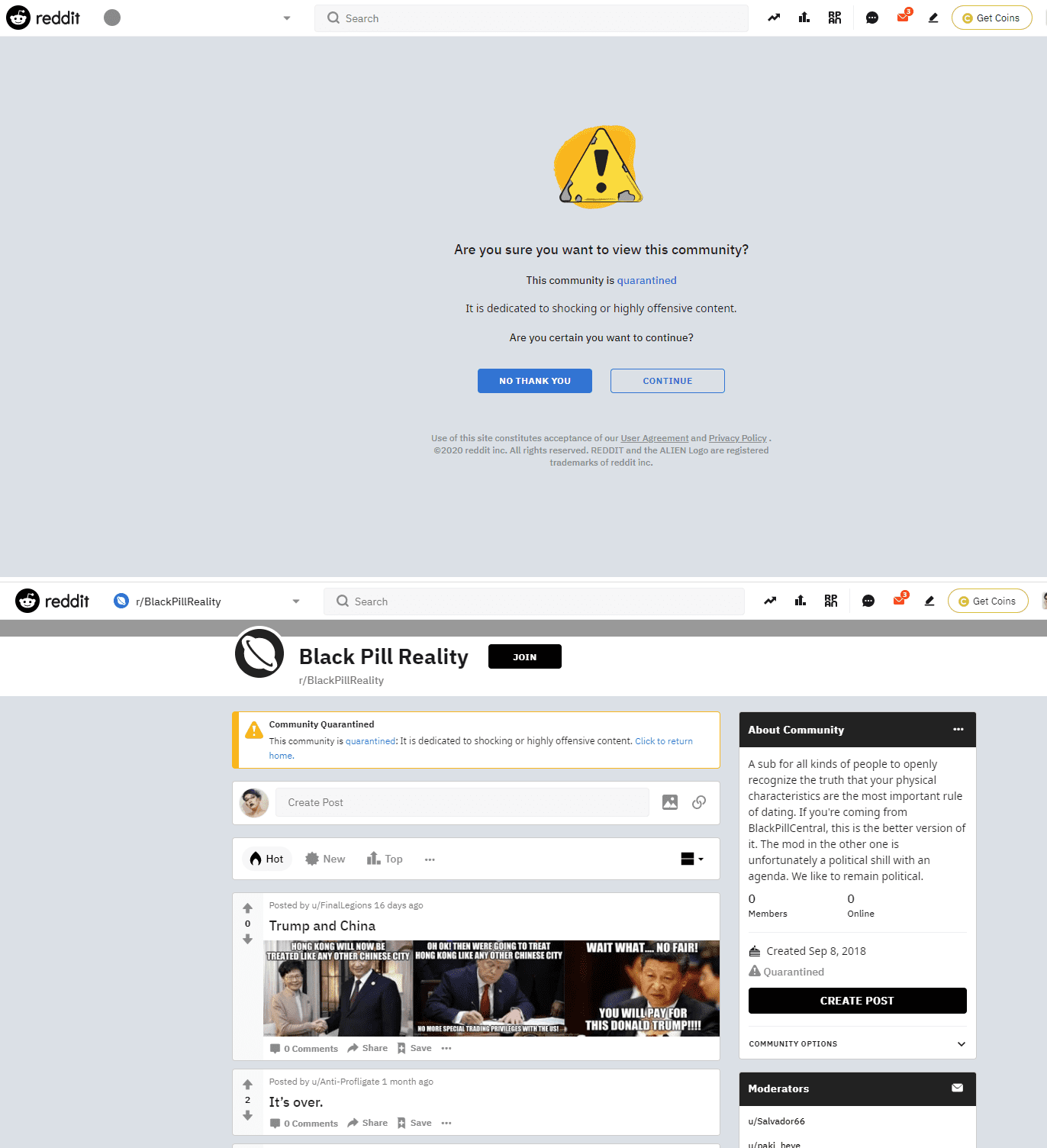

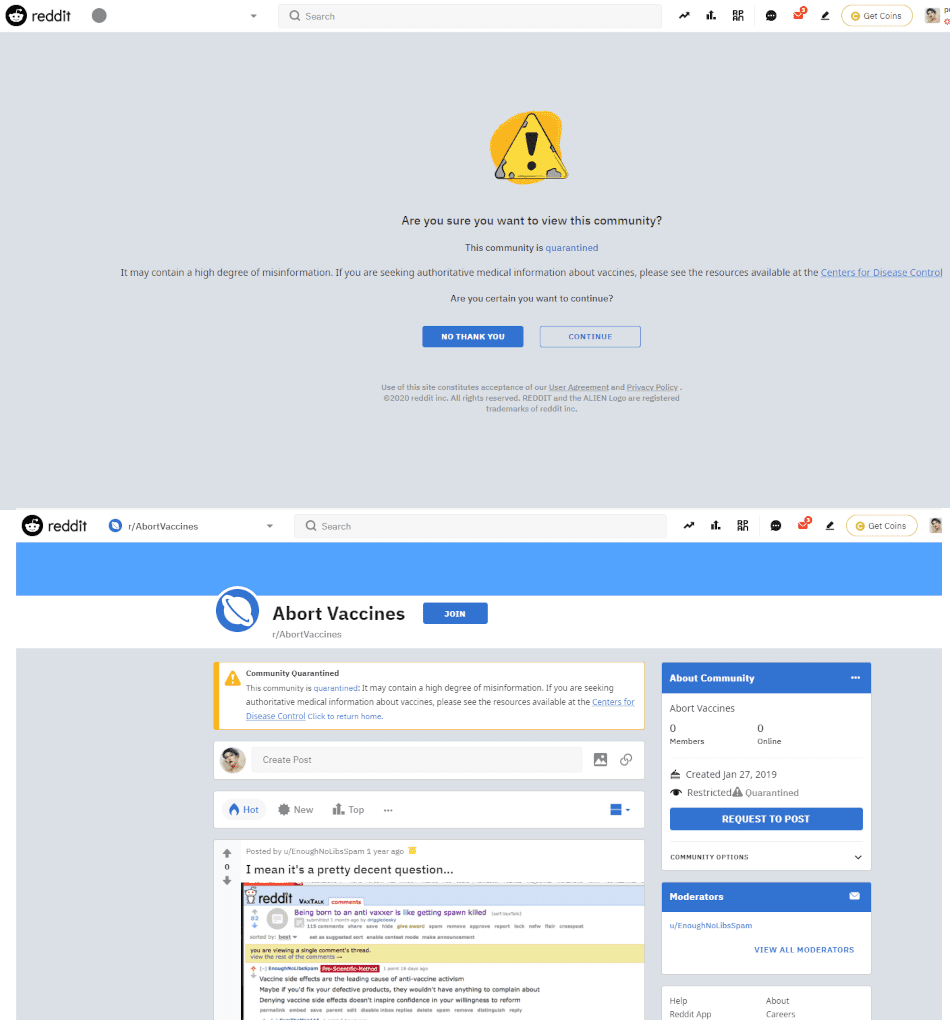

A notable content moderation shift by the Reddit platform took place in August of 2015, when its content policy update announced the implementation of Quarantines and updated policies for removals. Quarantines were presented as a type of subreddit label, where certain communities could not be accessed unless the user chose to “explicitly opt-in” through a warning page. This warning page also provided the policy in question that warranted the quarantine, and a link to enter the community. The moderator thread went on to explain that removals were applied to communities that “exist solely to annoy other redditors, prevent us from improving Reddit, and generally make Reddit worse for everyone else.”

Because Reddit Inc. employees do not moderate the forums, the platform implemented guidelines for volunteer users to moderate. These volunteers, referred to as “mods,” maintain the rules and functionality of each designated community. As per the April 2017 update, “Moderator Guidelines for Healthy Communities,” moderators were expected to maintain consistent rules for their community, as well as to bring their standards to the level of the platform-curated rules, the “Reddit Content Policy.” Mods have the authority to remove any content that is considered inappropriate to the community discussion or violating content policy, whether related to posts or comments in threads.

The platform’s policy contains eight essential rules, with the intention to allow moderators to select their rules and settings from a broad menu. Content policy guidelines require only “authentic” content be posted, and they prohibit “manipulative” content, in order to maintain a sense of “community and belonging” on Reddit. With regard to content labeling, the sixth rule states that communities must be “properly labeling content and communities, particularly content that is graphic, sexually-explicit, or offensive.” This implementation of content labeling falls under the direct responsibility of the volunteer moderators, who themselves have utilized and curated tagging systems for the subreddit.

Reddit Inc. posted an announcement in September of 2018, “Revamping the Quarantine Function,” with new updates for this warning mechanism. Additional categories subject to quarantine were presented that were not previously stated in platform guidelines. These categories included communities “dedicated to promoting hoaxes” and communities hosting content that goes against facts that are “either verifiable or falsifiable and not seriously up for debate.” When a user provided consent to enter the community, a banner at the top of the subreddit page reiterated quarantine policies. An appeals process was also added, so that mods could inquire about how Reddit reviewed their community’s content and possibly have the quarantine removed.

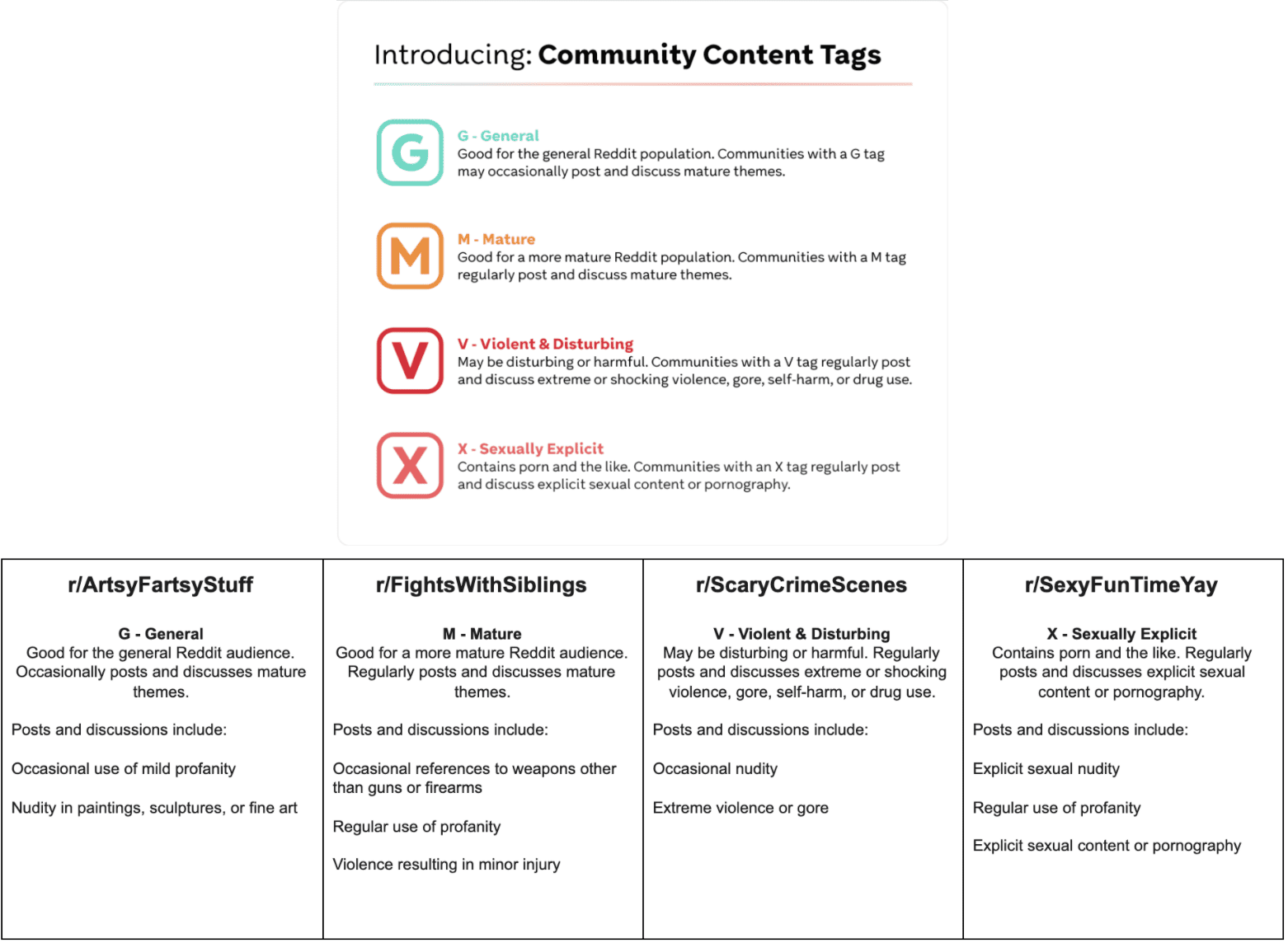

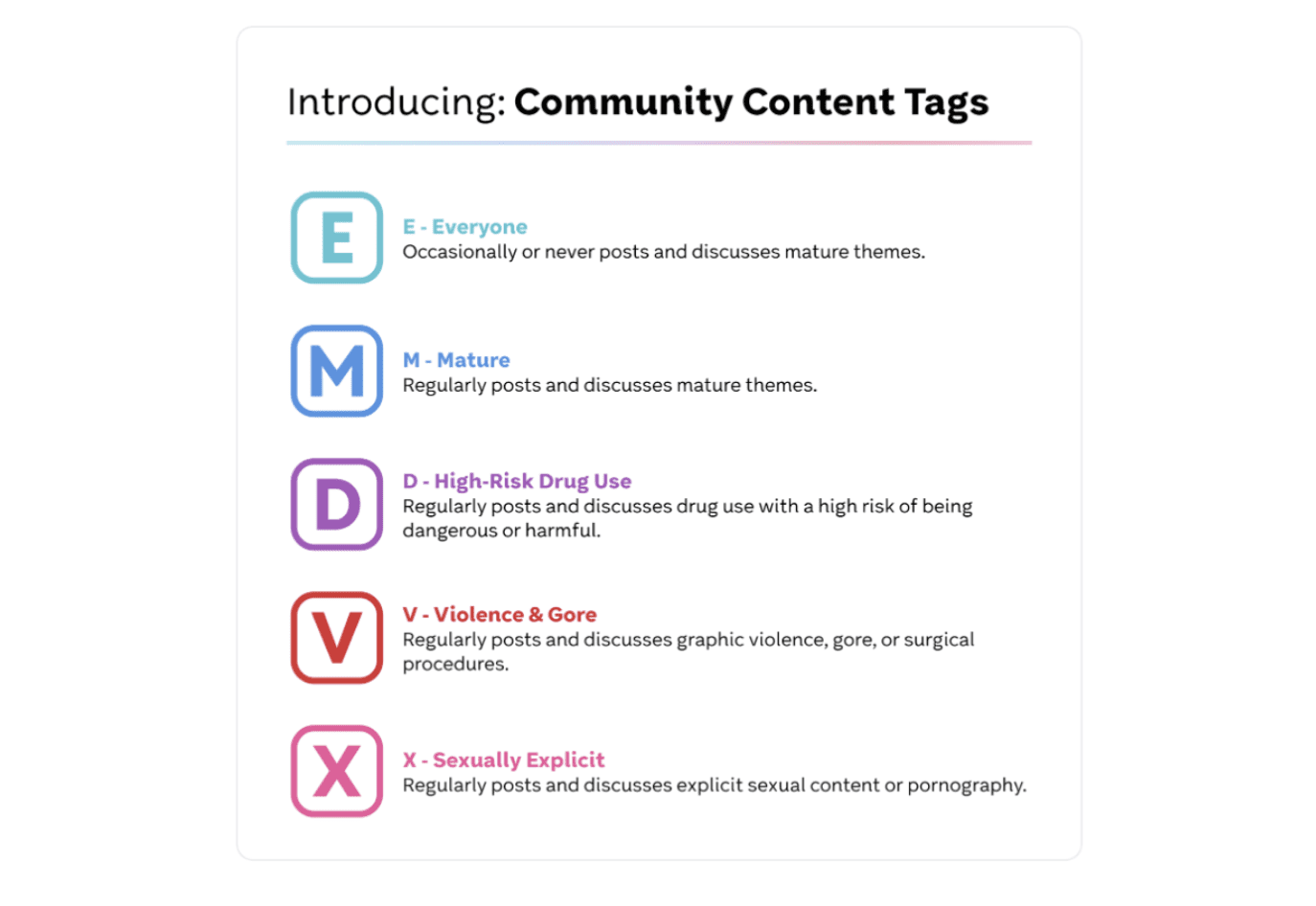

Reddit first announced a new NSFW label system in July of 2020, and followed up with an announcement of the new labels in September of 2020, posted in the moderators’ subreddit. These labels would be applied to a community that frequently posts and discusses mature content within its subreddit. The community content tags at initial launch were General (G), Mature (M), Violent and Disturbing (V), and Sexually Explicit (X). The announcement included tables that explain each designation.

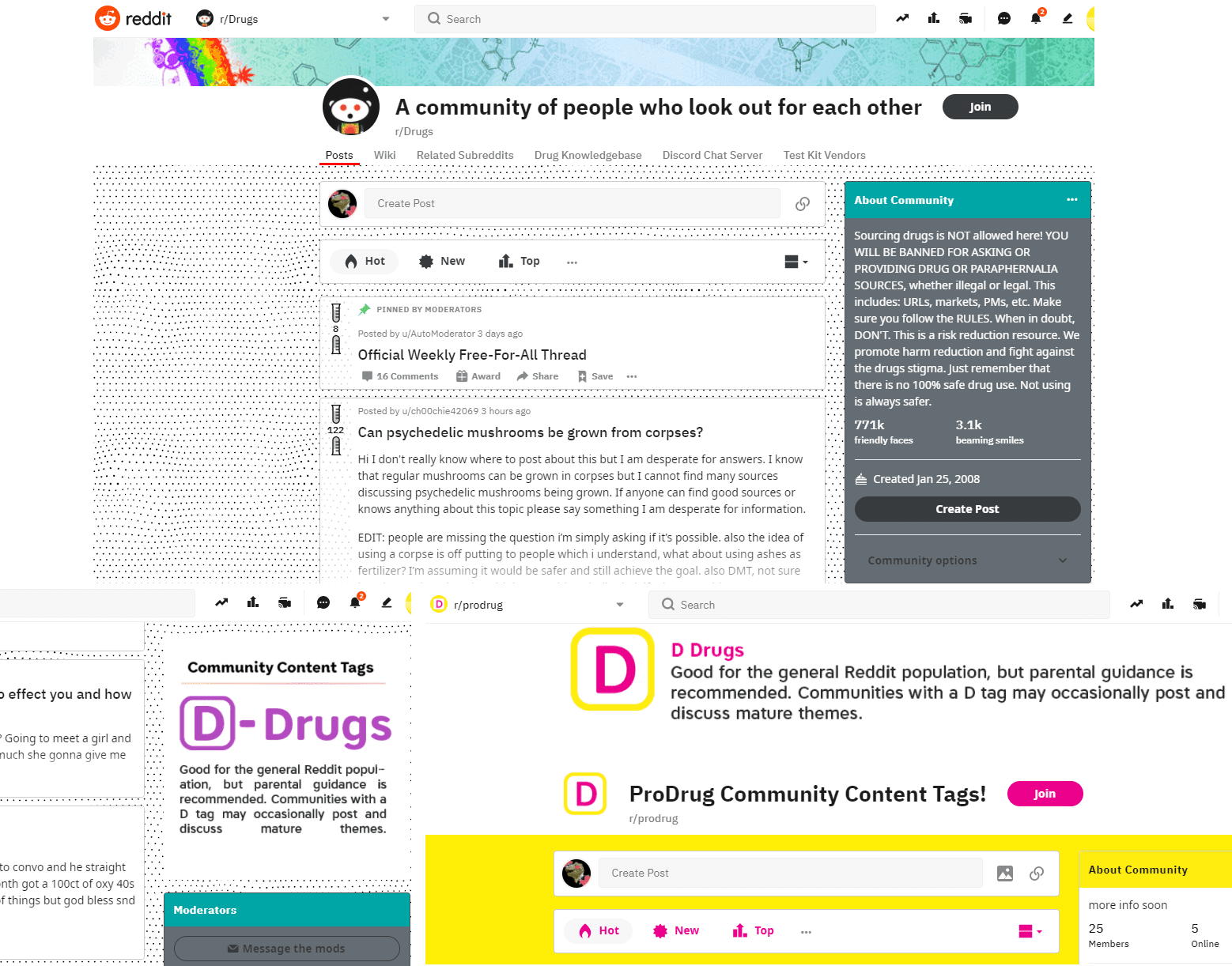

Shortly after, in December of 2020, Reddit published a post on the moderator news page with new adjustments to the community content tags, in an attempt to provide more accurate and clarifying content indicators. The tags expanded to Everyone (E), Mature (M), High-Risk Drug Use (D), Violence and Gore (V), and Sexually Explicit (X). The update also included clarification of content criteria terms, such as “profanity” and “alcohol, tobacco and drug use.” The Reddit Help page for community content tags explains how moderators can acquire tags by completing a Reddit-curated survey on their content, and that the content tags would only be accessible to a number of trial communities.

Although the community content tags had not been available outside of a limited trial, there were some communities that displayed support for the new content tag system, and furthermore implemented the label apart from platform procedure. The subreddit /r/Drugs/ chose to display the community tag for drug use, as well as utilize a tag system to indicate topics of drug discussion in their community.

Reddit – U.S. 2020 Elections

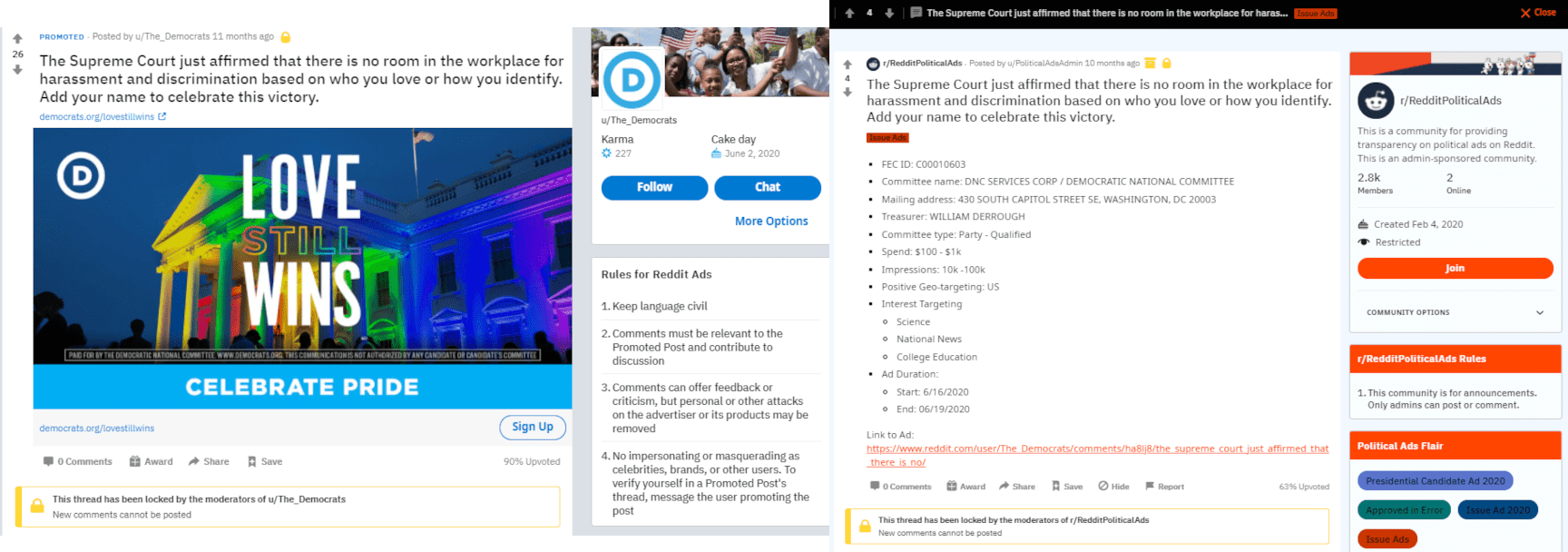

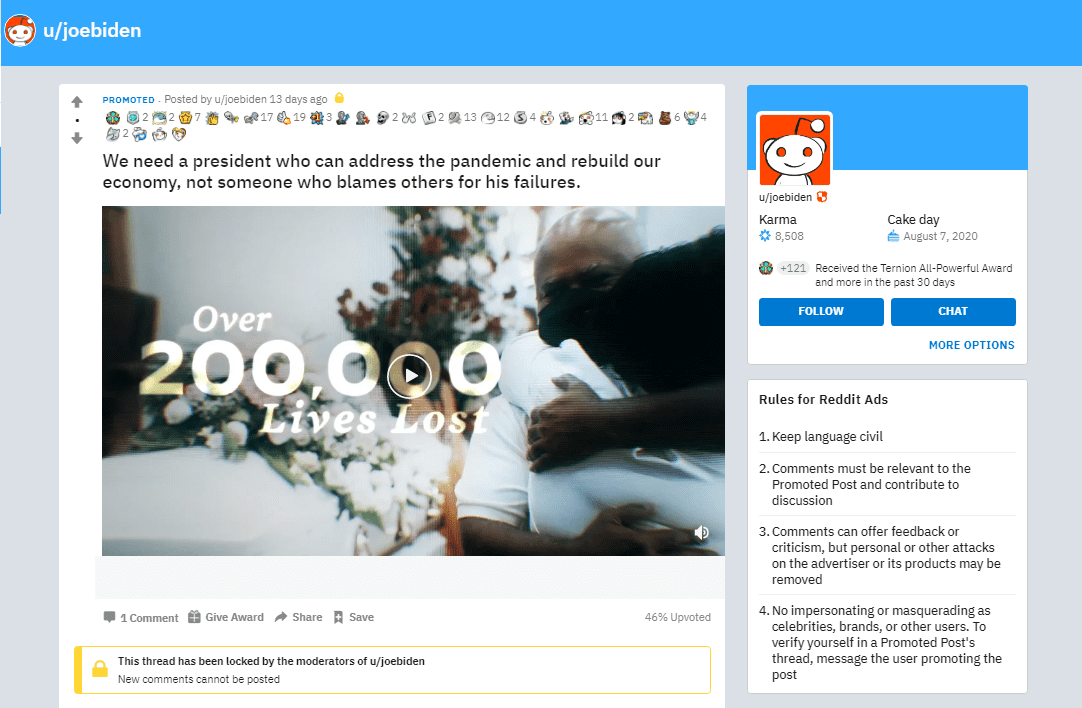

For the upcoming presidential elections in the United States, in April of 2020 Reddit published an announcement, “Changes to Reddit’s Political Ads Policy.” Advertisements had always been reviewed on the platform, and those that contain “deceptive, untrue, or misleading” content would be rejected and removed. Political advertisements would be subject to the same criteria, and they would also be required to contain a visible “paid for by” disclosure. However, this disclosure was only available on the transparency page that was added with this update, /r/RedditPoliticalAds/. This subreddit would act as a political advertising archive dating back to January 2019 and would tag ads by topics such as “presidential campaign” or political “issue.”

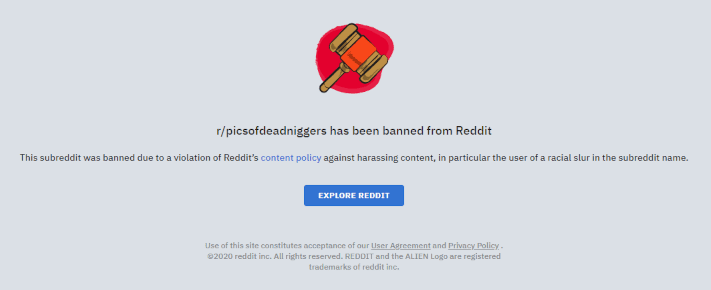

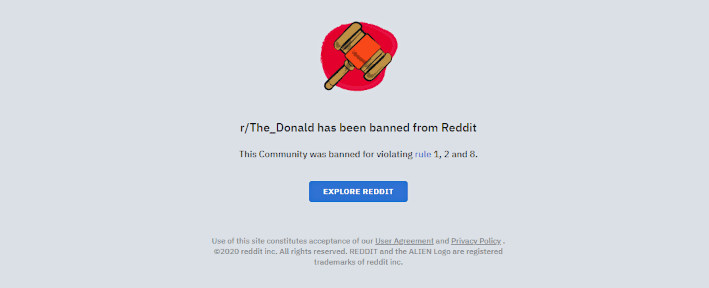

In June of 2020, Reddit published an announcement, “Update to Our Content Policy,” with numerous changes to its content moderation, as well as platform values and administration. This was in response to the political and social issues ongoing in the United States. The announcement stated the following changes to Reddit’s content policy: First, “Rule 1 explicitly states that communities and users that promote hate based on identity or vulnerability will be banned.” Second, “Rule 2 ties together our previous rules on prohibited behavior with an ask to abide by community rules and post with authentic, personal interest.” This update focused primarily on the removal of content, as Reddit would ban any communities that violated the platform rules, especially those regarding hate speech. Under this policy update, the platform began banning more than 2,000 subreddits. This included the politically viral pro-Trump community r/The_Donald, which the platform asserted incited hate and promoted harmful conspiracies that went against the updated content policy.

Reddit announced in September 2020 that it would be administering a trial update to community discussion on political ads, in an attempt to encourage discussion regarding the upcoming U.S. presidential elections. Rather than commenting on ads directly, where the original poster could moderate discussion with their political agenda, political ads would have the option to be forwarded into a subreddit and have comments within the community. So while the advertisement itself was subject to internal review by Reddit, the comment threads and the choice to include political ads in communities were at the discretion of the mods.

Reddit – COVID-19

When COVID-19 became a viral discussion topic on Reddit, the platform responded by increasing verifiable information on the platform in tandem with quarantines. The company blog published “Expert Conversation on Coronavirus” on March 2, 2020. First, Reddit announced multiple dates for “Ask Me Anything” (AMA) open forum discussions with experts for COVID-19 insight, both political officials and health professionals. Each AMA discussion was a text-forum-based event hosted within a Reddit community and overseen by the mods as the experts responded to comments and questions in the designated event thread.

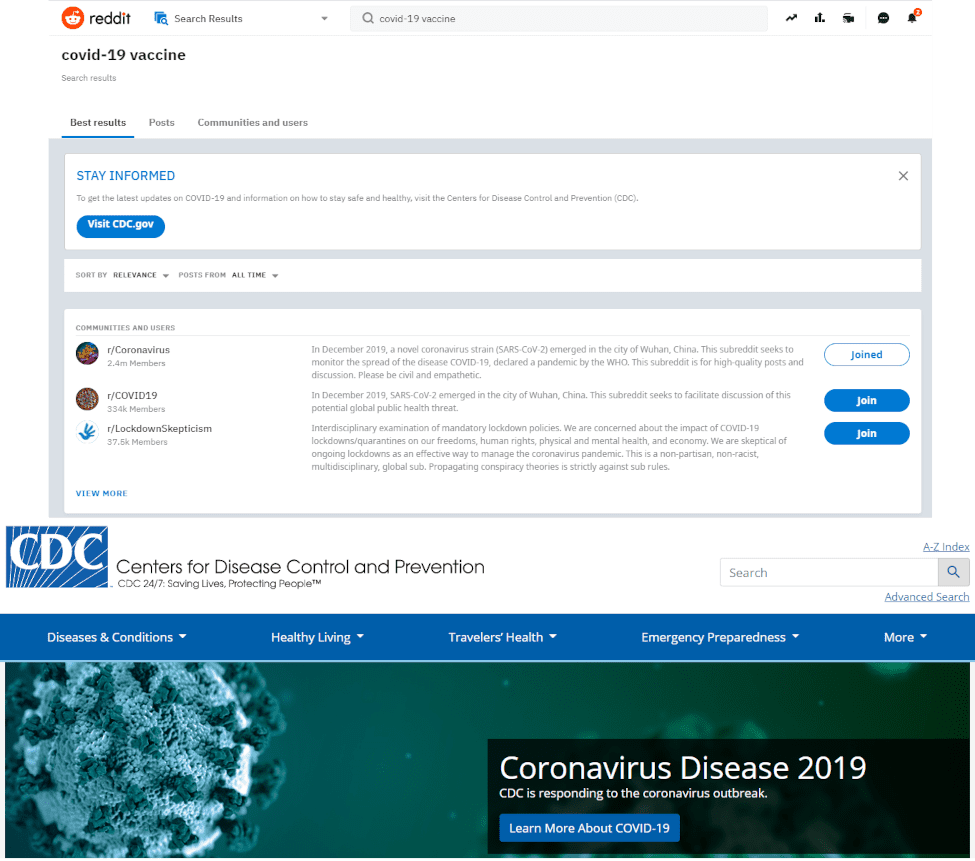

Reddit also stated in a March 2020 blog post that the platform would add labels to related search topics on COVID-19. Above the search results was an information banner to direct users to the CDC website for authoritative information. A limited number of promoted pages would also display the information banner. The implementation of quarantines against misinformation or hoaxes was also reiterated by the platform, as the same criteria would apply to COVID-19 content and community standards.

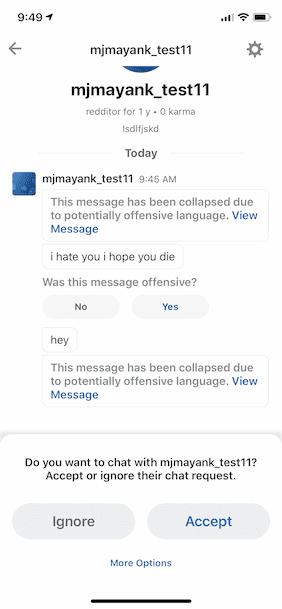

In April of 2020, Reddit announced on its blog the “Start Chatting” feature, which matched users to community group chats through the platform’s pre-existing messaging function. According to Reddit, stay-at-home restrictions for COVID-19 had increased chat volume on the platform, and so the pandemic was the main impetus for updating chat functions and moderation. Content labeling for the chats was updated later in the year, with a moderator news post in September, “Some Chat Safety Updates.” Contents of a chat were reviewed by automation, and if a piece of content or text was flagged as harassment or hate speech, the message would be hidden behind a panel. In tandem with increased spam review by the platform, reports of “offensive” content as indicated by these panels would provide moderators with information on their community members to better implement rules and bans.

Reddit – Research Observations

Reddit Inc. does not have a centralized strategy for content labeling for misinformation, but instead has built functions and policies to support the mods’ enforcement of content rules within communities. The responsibility of content moderation on Reddit was mostly deferred to subreddit mods, the volunteer-based discussion board leaders and rule enforcers. Violations regarding content guidelines were variable, and possibly arbitrary, depending on the decisions of the moderators and the user’s responsibility to tag and report content. As Reddit stated in the 2019 content policy version, the platform “provides tools to aid moderators, but does not prescribe their usage.”

Quarantines were the most apparent form of content labeling moderation implemented by the platform. Reddit stated in a 2018 update on quarantines that the “purpose of quarantining a community is to prevent its content from being accidentally viewed by those who do not knowingly wish to do so, or viewed without appropriate context.” This same announcement also provided a platform report on how quarantines had affected communities since initial implementation. Reddit Inc. said that “quarantining a community may have a positive effect on the behavior of its subscribers by publicly signaling that there is a problem. This both forces subscribers to reconsider their behavior and incentivizes moderators to make changes.”

Where quarantines are applied, it would seem that there is a high level of friction against viewers incidentally consuming harmful or offensive content. Users are required to click through the web page and confirm their consent; they are explicitly provided with the platform policy in question. However, the application of quarantines on Reddit was confusing where the criteria for prohibited content (inciting harassment, graphic or violent content) intersected with the criteria for content subject to Quarantine (harmful, or containing misinformation). There is no clear evaluation from the platform regarding when a discussion should receive a Quarantine or further action, and most of the responsibility for maintaining content policy in subreddits is left to the moderators.

Furthermore, a user’s browser history remembered which quarantines had been clicked through and consented to, and the quarantine page was not displayed again unless the user was in incognito mode or logged onto a different server. As a result, users were not repeatedly subject to the friction against such content in these communities, and the apparent danger of the quarantined community was lessened.

Although Reddit has promoted healthy discussion in response to real-world events, there has been little direct change in policy regarding such issues. There are no criteria specifically regarding COVID-19 content, as Reddit does not conduct fact-checks and has minimal evaluation criteria for general content. Despite there being many pages and guests associated with the public forums on COVID-19 promoted by Reddit, known as “Ask Me Anything” events, there are no formal criteria provided for the evaluation of “appropriate resources and authoritative content” in discussion. Communities that violated the policy on information resources for COVID-19 were subject to quarantine.

As for the 2020 U.S. presidential elections, the primary reaction of Reddit Inc. was an increase in content removal. This focused on the removal of hate speech from the platform, based on the number of community violations. There was some attempted promotion of healthy discussions by allowing comments on political ads to be linked directly to subreddits, which enabled more relevant moderation by communities and limited moderation by the original poster. However, the content rules were only subject to review by community mods, and they were more difficult to moderate under platform rules for hate and misinformation.

Where volunteer mods are responsible for content within a community, the Reddit platform focuses its moderation on how subreddits affect the platform overall. This was effective in disrupting large communities of disinformation, violence, and hate. However, there has been less focus on the moderation of individual users by the platform. While r/The_Donald may have been removed for promoting extremism and hate, the users of that subreddit can continue to engage in other communities. A quarantine may also protect users from content they consider harmful or offensive, but it is an insular solution that cuts both ways: the members of a quarantined community are unlikely to provide much friction within a community where harmful misinformation may be considered acceptable.