By John Wihbey, Assistant Professor of Journalism and New Media, Northeastern University

Note: John Wihbey’s research is partially supported by a grant from the NULab for Digital Humanities and Computational Social Science.

The year 2017 will certainly go down as one of the more vertiginous and, let’s just say it, outright crazy moments in modern American media history. It was the year that journalism itself, normally ignored as an institution by most of the public, became a central topic of conversation, in no small part because of President Trump’s highly critical tweets about the news media and the continually surprising, often amazing stream of reporting that emanated from the nation’s capital.

It was also a fascinating year in which to be studying journalism and journalists, which, thanks in part to two NULab travel grants, I was able to do. With these grants, I presented conference papers at the International Symposium on Online Journalism in Austin, Texas, and at the KDD Data Science + Journalism workshop in Halifax, Nova Scotia (part of the 23rd SIGKDD Conference on Knowledge Discovery and Data Mining).

The latter paper was the product of an interdisciplinary effort with NULab co-director David Lazer and his Lab team, as I’ll detail below.

Knowing the numbers

My work early in 2017 centered on questions relating to the training, preparation, and capacity of journalists. How well prepared are journalists to report on complex issues in our increasingly data-driven society? What is their familiarity with statistics, data, and research? And what about the educators, like myself, who train young journalists? Are we ready to impart the skills and knowledge necessary to deal with a complex world?

With my co-author Mark Coddington, an assistant professor at Washington and Lee University, I set out to answer these questions using original survey data that I had collected from more than 1,000 working journalists and 400 journalism educators. We asked respondents for their opinions about the value of statistics, data, and research in the practice of journalism, and about their own skill levels. Perhaps surprisingly, we found journalists to be quite positive about the power of academic research and its importance to journalism, more positive on average than the journalism educators. We found both groups about equally likely to recognize the importance of statistical efficacy in journalism. And the ability to use statistics was not strongly correlated with degree attainment: plenty of people without Ph.D.s had data skills. Overall, it was a mixed picture, with journalists and educators acknowledging the importance of data skills and research but candidly owning up to their own deficiencies in those areas.

Data journalism is a big part of the curriculum and research agenda we’re focusing on at Northeastern. But while it’s a growing field in news media, with some of the best reporters and editors now doing work that looks more than a little like social science, it’s clear that the field as a whole has a long way to go.

In any case, the study, “Knowing the Numbers: Assessing Attitudes among Journalists and Educators about Using and Interpreting Data, Statistics, and Research,” won the best research paper award at the symposium. It was published in the #ISOJ Journal.

Journalistic filter bubbles

Later in 2017, I worked on another structural issue related to media practice. With the help of another NULab grant, I wrote a second paper that attempted to look at the “filter bubble” problem from a novel angle. The issue of online homophily (birds of a feather flocking together in social media echo chambers or silos) is typically discussed as an issue affecting average individuals. But perhaps we should be even more worried about elites such as journalists, who help set the agenda for what is important. My co-authors (post-doc Kenny Joseph and Thalita Coleman of the Network Science Institute, and Prof. Lazer) and I decided to look at the degree of ideological segregation among journalists on Twitter. Further, we wanted to see whether that segregation correlated with the ideological leaning of the journalists’ published output.

Our research design specified that we select a group of journalists active on the platform, code the accounts they followed for liberal-conservative ideological leaning, and then apply another coding system to the ideology of the stories they published. In other words, we constructed measures of journalists’ ideology based on whom they follow on Twitter and on the news articles they write. We then measured the strength of the ideological correlation using a regression analysis. Of course, we recognize that these measures can serve only as proxies for the actual ideological leaning of journalists and their stories. The analysis was based on 500,000 news articles produced by 1,000 journalists at 25 different news outlets.
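The regression step can be illustrated with a minimal one-predictor least-squares fit. This is a sketch, not the working paper’s model: it assumes each journalist already has a network score and an article score on the same scale (negative for left-leaning, positive for right-leaning), and the function name `ols_fit` and all numbers are hypothetical. The paper’s actual specification (any controls or weighting) is not described here.

```python
"""Sketch: one-predictor least-squares regression of article ideology on
network ideology. All data are hypothetical."""
from statistics import mean


def ols_fit(x: list[float], y: list[float]) -> tuple[float, float, float]:
    """Return (slope, intercept, pearson_r) for y ≈ intercept + slope * x."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be equal-length lists with at least 2 points")
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    if sxx == 0 or syy == 0:
        raise ValueError("x and y must each vary")
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / (sxx * syy) ** 0.5
    return slope, intercept, r


# Hypothetical per-journalist scores: negative = left-leaning, positive = right-leaning.
network_scores = [-1.2, -0.5, 0.0, 0.6, 1.1]
article_scores = [-0.8, -0.3, 0.1, 0.4, 0.9]
slope, intercept, r = ols_fit(network_scores, article_scores)
print(f"slope={slope:.3f} intercept={intercept:.3f} r={r:.3f}")
```

The slope estimates how far article ideology moves per unit of network ideology, while `r` summarizes how tightly the two measures track each other.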

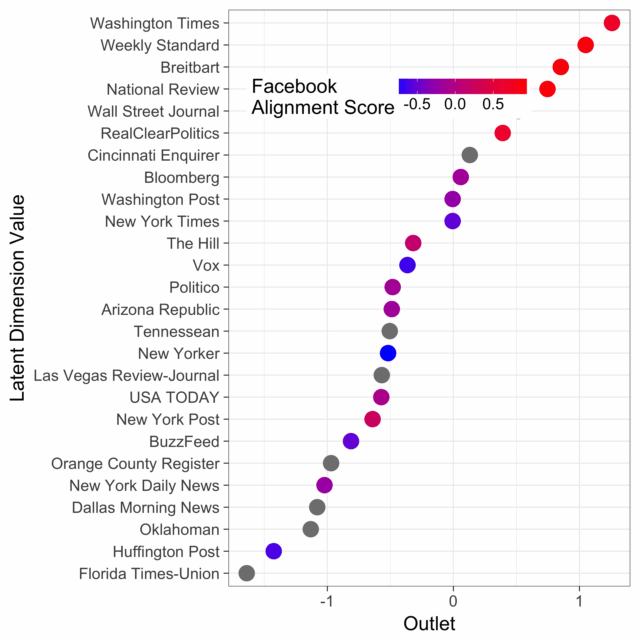

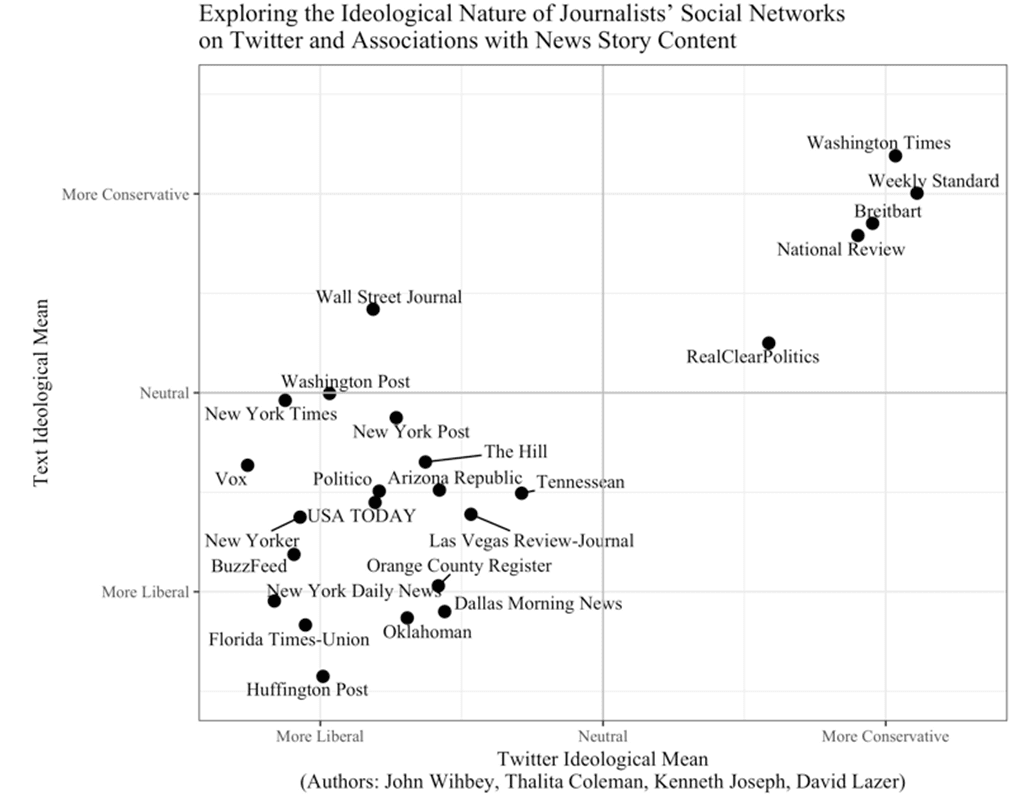

Our research found that there was indeed a reasonably strong relationship between the kinds of people journalists follow and the kinds of articles they write, as our graphics above illustrate.

I presented these results on behalf of the research team in a KDD 2017 conference working paper, “Exploring the Ideological Nature of Journalists’ Social Networks on Twitter and Associations with News Story Content.” The working paper itself received press coverage from outlets including Columbia Journalism Review and Axios. (We are continuing to refine it for journal publication.)

Here’s a bit more on our methods, for which Kenny Joseph deserves much of the credit:

Using the accounts of members of Congress, as well as 12,000 accounts belonging to people who can be verified as politically active (both through voter registration rolls and through the accounts of officeholders they follow), we trained a model to produce a score for the ideology of a given journalist’s Twitter network.
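The post does not describe the model the team trained, so the sketch below is a deliberately simpler stand-in rather than their method: it averages the known ideology scores of whichever reference accounts (standing in for members of Congress and verified partisans) a journalist follows. The account IDs, scores, and the `network_ideology` function are hypothetical.

```python
"""Simplified stand-in for scoring a journalist's Twitter network ideology.

This is NOT the research team's trained model. It only averages the known
ideology scores of reference accounts that a journalist follows.
"""
from statistics import mean
from typing import Optional

# Hypothetical reference accounts and scores (negative = left, positive = right),
# standing in for members of Congress and verified politically active users.
REFERENCE_SCORES: dict[str, float] = {
    "member_a": -1.0,
    "member_b": -0.5,
    "voter_c": 0.25,
    "member_d": 1.0,
}


def network_ideology(follows: set[str], reference: dict[str, float]) -> Optional[float]:
    """Mean score of the followed accounts that appear in the reference set.

    Returns None if the journalist follows no reference accounts.
    """
    known = [reference[acct] for acct in follows if acct in reference]
    return mean(known) if known else None


journalist_follows = {"member_a", "member_b", "local_bakery"}
print(network_ideology(journalist_follows, REFERENCE_SCORES))
```

A trained model can weight accounts by how informative they are; plain averaging treats every reference account equally, so it is only a rough illustration of the idea.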

Immediately, we could see some validation of this model. Journalists who followed the most right-leaning accounts were at traditionally right-leaning outlets such as the Washington Times, Breitbart, and The Hill. Similarly, journalists following the most left-leaning accounts tended to be from left-leaning outlets. However, there were interesting exceptions. For example, among the journalists following the most right-leaning accounts were a few stray journalists at Politico, the New York Times, and the Washington Post. Why? It turns out that some of these individuals cover beats such as the Trump administration or the Republican-led Congress. This points to a weakness in the method for special cases where a journalist’s narrowly focused beat may require him or her to follow the officeholders and policymakers on that beat and to quote the phrases they often use. This potential weakness does not, however, invalidate the general pattern, which points to the possibility of journalistic homophily.

To score the ideological leanings of the stories, we used a corpus of 150,000 congressional public statements from VoteSmart; after scoring all terms, we extracted the 100 most left-leaning (negative) and the 100 most right-leaning (positive) terms. Examples include “lgbt,” “equal pay,” and “voting rights act” on the left, and “bureaucrats,” “illegal immigrants,” and “sponsor of terrorism” on the right. After further narrowing these to 50 terms per side, we scored journalists’ aggregate stories for ideological leaning based on their use of those terms. It is, admittedly, a limited measure, but testing it against the collective stories of various outlets (for example, Huffington Post and Vox on the left, Breitbart and National Review on the right) suggested that the method had validity.
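The lexicon approach can be sketched in a few functions. The post doesn’t give the term-scoring formula, so this sketch assumes a smoothed log-odds ratio between Republican and Democratic statements, keeps up to `k` terms per side (collapsing the post’s 100-then-50 narrowing into a single parameter), and scores a journalist’s stories as right hits minus left hits, divided by total hits. All function names and the toy corpus are hypothetical.

```python
"""Sketch of a partisan-term lexicon and lexicon-based story scoring.

Assumptions (not taken from the working paper): terms are scored with a
smoothed log-odds ratio between Republican and Democratic statements, and
a journalist's stories are scored as (right hits - left hits) / total hits.
"""
import math
import re
from collections import Counter
from typing import Optional


def ngrams(text: str, max_n: int = 3) -> list[str]:
    """Lowercased word n-grams (1..max_n), so phrases like 'equal pay' count."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return [
        " ".join(tokens[i : i + n])
        for n in range(1, max_n + 1)
        for i in range(len(tokens) - n + 1)
    ]


def term_scores(dem_docs: list[str], rep_docs: list[str], alpha: float = 1.0) -> dict[str, float]:
    """Smoothed log-odds of each term in Republican vs. Democratic text.

    Negative scores lean left and positive scores lean right, matching the
    sign convention described in the post.
    """
    dem = Counter(g for d in dem_docs for g in ngrams(d))
    rep = Counter(g for d in rep_docs for g in ngrams(d))
    vocab = set(dem) | set(rep)
    # Add-alpha smoothing so terms unseen on one side still get a finite score.
    dem_total = sum(dem.values()) + alpha * len(vocab)
    rep_total = sum(rep.values()) + alpha * len(vocab)
    return {
        t: math.log((rep[t] + alpha) / rep_total) - math.log((dem[t] + alpha) / dem_total)
        for t in vocab
    }


def build_lexicon(scores: dict[str, float], k: int) -> tuple[set[str], set[str]]:
    """Keep up to k most negative (left) and k most positive (right) terms."""
    ranked = sorted(scores, key=lambda t: (scores[t], t))  # ties broken alphabetically
    left = {t for t in ranked[:k] if scores[t] < 0}
    right = {t for t in ranked[::-1][:k] if scores[t] > 0}
    return left, right


def story_ideology(stories: list[str], left: set[str], right: set[str]) -> Optional[float]:
    """Score in [-1, 1] from lexicon hits; None if no lexicon term appears."""
    grams = [g for s in stories for g in ngrams(s)]
    left_hits = sum(g in left for g in grams)
    right_hits = sum(g in right for g in grams)
    total = left_hits + right_hits
    return (right_hits - left_hits) / total if total else None


# Toy corpus standing in for congressional statements (hypothetical).
dem_statements = ["Equal pay now", "Protect the voting rights act"]
rep_statements = ["Cut the bureaucrats", "Stop illegal immigrants"]
left_terms, right_terms = build_lexicon(term_scores(dem_statements, rep_statements), k=50)
print(story_ideology(["Cut the bureaucrats!"], left_terms, right_terms))
```

Because overlapping n-grams are counted separately, a phrase like “voting rights act” also contributes its component words; a fuller pipeline might prefer the longest match or filter out uninformative words.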

This kind of research into the connection between behavior and influence in online networks, and its implications for the flow of public information, is all the more important in an era filled with rampant claims of media bias. If we are going to have this argument at full volume for the foreseeable future, we should take a more scientific approach to it.