The Digital Scholarship Group and NULab for Texts, Maps, and Networks at Northeastern University hosted a virtual event showcasing the diverse array of digital projects and research being carried out at Northeastern. As a part of the Digital Humanities Open Office Hours series, the DH Lightning Talks featured presentations from Dr. Brian Ball (Philosophy, NU London), Tieanna Graphenreed (English, NU Boston), Robin Lange (Network Science, NU Boston), and Sean Rogers (NULab Assistant Director).

The Lightning Talks were kicked off by Dr. Brian Ball, Associate Professor in Northeastern University London’s Department of Philosophy. He presented on the Simulating Epistemic Injustice project, which is a part of the PolyGraphs project (link to Dr. Ball’s presentation). The Simulating Epistemic Injustice project looks at the influence of social network structures and information consumption strategies on public opinion by developing and analyzing simulations of information processing over social networks. Dr. Ball’s research team develops computer simulations which model digital communities as networks of agents investigating a hypothesis, learning from observations, learning from their network neighbors, and communicating relevant evidence to their network. The models make a number of idealized assumptions about how agents investigate a hypothesis and identify factually true, or false, information: Dr. Ball compared the simulation to a series of coin tosses, with each toss providing evidence about whether the coin is biased toward heads or tails. The project’s models show that, in general, agents place more trust in those with aligned views, and this increase in trust leads to more knowledge. The idealized models also show how the presence of misreported information from agents (i.e., misinformants) and intentional disinformation (disinformants) affects information processing and subsequent beliefs. In addition, they identify different pathways of information processing: agents can use a gullible information processing strategy, in which agents’ trust is not aligned with the trustworthiness of the information, or a skeptical strategy, in which agents’ level of trust matches the reliability of the information. In the presence of misinformation, the skeptical strategy is more likely than the gullible strategy to lead agents to a correct consensus.
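To make the coin-toss analogy concrete, here is a minimal Python sketch of agents on a small network who toss a biased coin, share their results with their neighbors, and update their beliefs by Bayes’ rule. It is an illustration under stated assumptions, not the PolyGraphs team’s code: the function names, the ring-shaped network, the bias size `eps`, and the number of tosses are all hypothetical, and the sketch leaves out misinformation, disinformation, and the gullible and skeptical trust strategies described above.

```python
import random


def bayes_update(credence, heads, tosses, eps):
    """Return the posterior credence that the coin favours heads.

    Two rival hypotheses are assumed: P(heads) = 0.5 + eps or 0.5 - eps.
    """
    p_hi, p_lo = 0.5 + eps, 0.5 - eps
    tails = tosses - heads
    like_heads_biased = p_hi ** heads * p_lo ** tails
    like_tails_biased = p_lo ** heads * p_hi ** tails
    numerator = credence * like_heads_biased
    return numerator / (numerator + (1 - credence) * like_tails_biased)


def simulate(neighbors, eps=0.1, tosses=10, steps=50, seed=0):
    """Run a toy simulation in which the coin truly favours heads.

    `neighbors` maps each agent to the agents whose reports it can see.
    """
    rng = random.Random(seed)
    credences = {agent: 0.5 for agent in neighbors}  # everyone starts undecided
    for _ in range(steps):
        # Each agent tosses the coin and honestly reports its number of heads.
        reports = {
            agent: sum(rng.random() < 0.5 + eps for _ in range(tosses))
            for agent in neighbors
        }
        # Each agent updates on its own report and on its neighbors' reports.
        for agent, friends in neighbors.items():
            for source in [agent, *friends]:
                credences[agent] = bayes_update(
                    credences[agent], reports[source], tosses, eps
                )
    return credences


# Hypothetical four-agent ring network.
ring = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
final = simulate(ring)
for agent, credence in sorted(final.items()):
    print(f"agent {agent}: credence that the coin favours heads = {credence:.3f}")
```

Because every agent here reports honestly and trusts every neighbor fully, the sketch only captures the baseline case. A trust-sensitive variant would need some way to discount reports from particular sources, which is where the strategies discussed in the talk would come in.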

This research supports simulations of epistemic injustice. Epistemic injustices take many forms; for example, police authorities may not trust information provided by witnesses from certain populations. The models used by Dr. Ball’s team can simulate testimonial injustices, modeling them as either a deficit or an excess of credibility. The project’s aim is to look at the consequences of epistemic injustices for community opinions. One audience member asked whether the project plans to run other simulations to capture additional features of epistemic injustices on social media sites, and noted the current limitations of using X (formerly Twitter) data. Dr. Ball reported that the team uses demographic data to understand how social groups and different identities can be accurately represented in their simulations, but that limited access to data has slowed the project. The project is hoping to find collaborators to run additional simulations of epistemic injustices and help plot their analyses in Python. If interested, please reach out to Dr. Ball.

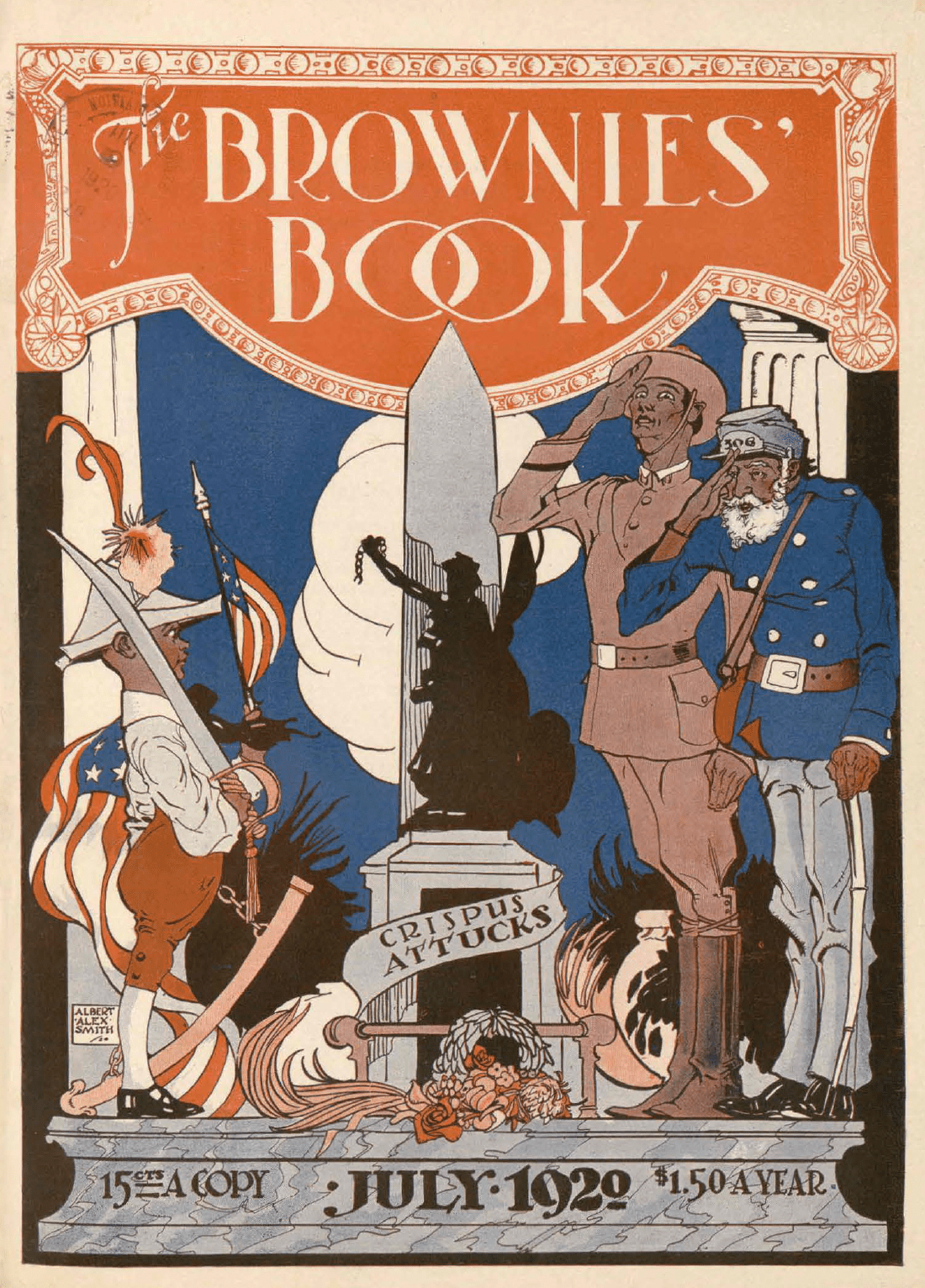

Tieanna Graphenreed, a Ph.D. candidate in the Department of English, presented their research on the Brownies’ Book, the first magazine designed for African-American children in the US. The magazine features stories, photos, songs, and more from leading African-American thinkers in the Boston area. Tieanna’s research involves digitally scraping and collecting text from issues of the Brownies’ Book and mapping the magazine’s circulation and content. Boston is an interesting geography for this study because it is a city known for its history of democracy and liberation, yet it also has a long history of tension surrounding Black Bostonians’ struggle for equal rights. The Brownies’ Book text collected by Tieanna highlights this tension and provides an opportunity to consider what educational role the magazine’s stories played, how children in Boston experienced the Brownies’ Book, and what kind of civic lives its readers had in the early 20th century.

During the Lightning Talks, Tieanna provided a live demo of the map under development, showing a glimpse of the important figures and Boston-associated materials highlighted in the Brownies’ Book. The map will have interactive features, allowing users to explore the physical locations of important figures and related narratives. This map will be used by Boston public school teachers to expand the BPS curriculum and support the Early Black Boston Digital Almanac. One audience member asked whether Tieanna is seeing any spatial trends while mapping the important materials and figures of the Brownies’ Book. Tieanna reported that the map has clusters of the schools mentioned in letters from children to the Brownies’ Book, as well as more dispersed clusters of neighborhoods, historic landmarks, and important figures featured in the magazine. Tieanna added that mapping the circulation of the Brownies’ Book shows that readership was concentrated on the US East Coast, with scattered readers in France and Cuba and some circulation to the US West Coast. The map of the Brownies’ Book will be launched soon with the Norman B. Leventhal Map Center at the Boston Public Library.

Robin Lange, a Ph.D. student from the Network Science Department, presented on their Paradox of Hate Speech project, which analyzes patterns of moral disengagement used by hate speech victims. Hate speech is a prevalent problem in digital spaces such as on social media platforms and websites, as well as in physical spaces. Evidence shows that hate speech victims face many negative, long-lasting mental health impacts. In addition, past research has shown that hate speech victims show a trend of normalizing hate speech behavior. Robin’s research considers if and how moral disengagement techniques are used by hate speech victims to normalize the actions of perpetrators. Robin has interviewed several participants about their experiences, taking care to make sure interviewing does not exacerbate the harms already experienced by participants. From these interviews, and their past research on trends in hate speech victimizations, Robin identified several mechanisms used by participants to normalize the hate speech behavior of perpetrators. These mechanisms include victims changing their behavior in an attempt to minimize hate speech and its negative impacts, victims dehumanizing their own experiences, and victims justifying the hate speech by attributing blame to themselves.

For future research, Robin plans to see if these trends show up across other hate speech victims, and they are in the process of recruiting more participants to obtain a larger study sample. Their preliminary results show that victims use at least one moral disengagement strategy, use contradictions, have varied thought processes, and are strongly affected by hate speech even when they deploy moral disengagement strategies. One audience member asked Robin how their project defines hate speech. Robin clarified that hate speech supports the dominant culture and maintains the status quo of oppression, so defining hate speech, and identifying its victim, means looking to the most socially vulnerable person in the interaction.

Finally, Sean Rogers, Assistant Director of the NULab and Ph.D. student at the University of Vermont, presented work on using machine learning and natural language processing to quantify the prevalence of behavioral health components in police incident response narratives from K-12 schools. He emphasized that for policymakers to divert police resources and create programs more tailored to supporting students’ behavioral health, we need to know how often and why these behavioral health reports occur. The data within police and school reports can be collected digitally, allowing researchers to detect behavioral health signals with machine learning. However, Sean made clear that these reports contain biases and do not reflect behavioral health signals as a health professional would identify them.

The project involved processing the narrative components of police response reports, for example by removing stopwords and fixing punctuation, to make the reports easier for the model to process. The model assigns tokens to the identified topics to find how often certain topics appear in these police response reports. The model’s precision and recall were high, indicating that it is assigning tokens to topics appropriately. One audience member asked Sean why he thinks the model performs so well. Sean explained that the model initially failed for lack of data and improved as more report data was added. The project aims to expand the model to look at behavioral health instances across different school years and to pinpoint topics of behavioral health issues within police and school reports. This information can help improve how policymakers allot resources to Boston schools and police to support students.
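For readers unfamiliar with these steps, the short Python sketch below shows what stopword removal and a precision and recall calculation can look like. It is not Sean’s pipeline: the stopword list, the function names, and the example sentence are all made up for illustration, the sketch simply strips punctuation rather than "fixing" it, and the labels in the usage example are invented rather than drawn from any real report.

```python
import re

# Tiny illustrative stopword list (an assumption; real lists are much longer).
STOPWORDS = {"a", "an", "and", "in", "of", "the", "to", "was"}


def preprocess(narrative):
    """Lowercase the text, drop punctuation, and remove stopwords."""
    tokens = re.findall(r"[a-z']+", narrative.lower())
    return [token for token in tokens if token not in STOPWORDS]


def precision_recall(predicted, actual):
    """Compute precision and recall for parallel lists of binary labels."""
    pairs = list(zip(predicted, actual))
    true_pos = sum(1 for p, a in pairs if p and a)
    false_pos = sum(1 for p, a in pairs if p and not a)
    false_neg = sum(1 for p, a in pairs if not p and a)
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return precision, recall


# Invented sentence, not taken from any actual report.
tokens = preprocess("The officer responded to a report of a student in distress.")
print(tokens)

# Invented labels: 1 means "behavioral health topic", 0 means "other topic".
precision, recall = precision_recall([1, 1, 0, 1, 0], [1, 0, 0, 1, 1])
print(f"precision={precision:.2f}, recall={recall:.2f}")
```

Precision is the share of flagged items that were truly relevant, and recall is the share of truly relevant items that were flagged, so high values on both suggest the model is neither over-flagging nor missing many cases.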

The suite of DH Lightning Talks highlights the incredible digital humanities and computational social science research projects underway at Northeastern University. For those interested in attending future DH events at Northeastern University, please follow this link to the NULab events page.